Voiceflow Leverages Generative AI ‘Prompt Chaining’ to Accelerate Conversational AI App Development

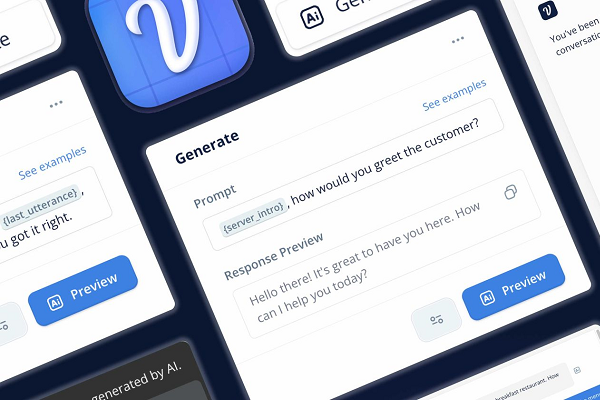

Chat and voice AI app design startup Voiceflow has augmented its platform with the ability to connect multiple prompts to generative AI engines. This ‘prompt chaining’ harnesses the large language models underlying the AI to accelerate the design and release of even very elaborate and sophisticated conversational AI assistants.

Voiceflow Prompt Chain

Voiceflow is known as a hub for developers looking to create voice and text-based conversational AI apps to publish on a wide range of platforms and channels. The recent explosion of interest in generative AI inspired Voiceflow to begin experimenting with the technology. In January, the company released the new Generate Step feature, which enabled developers to link their app to OpenAI’s GPT-3 large language model either to produce content or as a dynamic conversational tool. Generative AI can also take over some of the more tedious parts of design, like writing re-prompts and generating utterances, and Voiceflow is aiming to halve the time needed to design apps before user testing. Prompt Chaining builds on those generative AI experiments.

“Prompt Chaining is the ability to create dynamic conversations by connecting powerful model responses together. LLMs have a great ability to adjust based on context, so connecting multiple prompts to form a series of interactions where LLMs capture user content, generate responses, and make decisions is a great way to prototype conversations,” Voiceflow machine learning lead Denys Linkov told Voicebot in an interview. “For Voiceflow, we see it as a great way to prototype and build intricate projects quickly. It’s another tool that you have within the platform to design the best conversational AI experience.”

The idea is to make a chatbot or voice assistant more responsive and less static by connecting different kinds of prompts. Linkov described in a blog post how one such chain could include intent, conversation classification, entity capture, re-prompting, and persona prompts. For instance, if a restaurant wanted a voice AI, the prompt chain could get the assistant to find out if the customer wanted to eat, determine when they ask a question, fill out their order for the kitchen, follow up by asking about any missing information in the order, and have the AI converse in the style of a fictional character, all at the same time.
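To make the restaurant example concrete, here is a minimal Python sketch of how such a chain might be wired together. It is not Voiceflow's implementation or API: the function names, the prompt wording, the `REQUIRED_FIELDS` list, and the `fake_llm` keyword stub (which stands in for a real model so the code runs offline) are all assumptions made for illustration. For simplicity the steps run one after another.

```python
from typing import Callable, Dict, List

# Hypothetical interface: prompt text in, model text out.
LLM = Callable[[str], str]

# Illustrative order fields; not taken from Voiceflow.
REQUIRED_FIELDS = ["item", "size"]


def classify_intent(llm: LLM, utterance: str) -> str:
    """Intent prompt: does the customer want to order?"""
    prompt = f"TASK: intent\nAnswer ORDER or OTHER.\nUser: {utterance}"
    return llm(prompt).strip().upper()


def is_question(llm: LLM, utterance: str) -> bool:
    """Conversation classification prompt: is the customer asking something?"""
    prompt = f"TASK: question\nAnswer YES or NO.\nUser: {utterance}"
    return llm(prompt).strip().upper() == "YES"


def capture_entities(llm: LLM, utterance: str) -> Dict[str, str]:
    """Entity capture prompt; the reply is parsed from 'key=value;key=value'."""
    prompt = f"TASK: entities\nReturn item and size as key=value;key=value.\nUser: {utterance}"
    order: Dict[str, str] = {}
    for pair in llm(prompt).split(";"):
        key, sep, value = pair.partition("=")
        if sep and value.strip():  # skip fields the model left empty
            order[key.strip()] = value.strip()
    return order


def reprompt_for(missing: List[str]) -> str:
    """Re-prompt step: ask only for the information still missing."""
    return "Could you tell me the " + " and ".join(missing) + "?"


def in_persona(llm: LLM, persona: str, message: str) -> str:
    """Persona prompt: restyle the drafted reply."""
    prompt = f"TASK: persona\nRewrite in the voice of {persona}.\nMessage: {message}"
    return llm(prompt).strip()


def run_chain(llm: LLM, utterance: str, persona: str) -> str:
    """Each step's output decides which prompt runs next."""
    if classify_intent(llm, utterance) != "ORDER":
        draft = "I can help you place an order whenever you are ready."
    elif is_question(llm, utterance):
        draft = "Happy to answer that before we continue with your order."
    else:
        order = capture_entities(llm, utterance)
        missing = [field for field in REQUIRED_FIELDS if field not in order]
        if missing:
            draft = reprompt_for(missing)
        else:
            draft = f"Order sent to the kitchen: {order['size']} {order['item']}."
    return in_persona(llm, persona, draft)


def fake_llm(prompt: str) -> str:
    """Keyword stub in place of a real model (an assumption for this sketch)."""
    lines = prompt.splitlines()
    task, last = lines[0], lines[-1]
    text = last.lower()
    if task == "TASK: intent":
        return "ORDER" if "pizza" in text else "OTHER"
    if task == "TASK: question":
        return "YES" if text.endswith("?") else "NO"
    if task == "TASK: entities":
        size = "large" if "large" in text else ""
        return f"item=pizza;size={size}"
    # Persona task: prepend a pirate flourish to the drafted message.
    return "Arr, " + last.split("Message: ", 1)[1]
```

With the stub, `run_chain(fake_llm, "I want a pizza", "a pirate")` comes back with a pirate-styled re-prompt asking for the size, because the entity step found an item but no size. Swapping `fake_llm` for a function that calls a real model would leave the chain logic unchanged, which is the appeal of treating each prompt as a separate, connectable step.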

Flow Rush

The release of Voiceflow’s generative AI tools in January has already led to a measurable uptick in conversational design speed, as designers hit writer’s block less often, according to Linkov. Developers can also test and prototype more quickly by asking the AI to define the many hundreds or thousands of edge cases that they previously had to compose with far more limited tools. The way designers were playing with LLMs within Voiceflow’s system encouraged the company to deepen those connections, and prompt chaining was one of the results. Linkov described it as a “new framework” for creating assistants, which is why prompt chaining and other new development steps have their own section of the platform.

“As models continue to get faster, cheaper, and better, chaining will be more common. It becomes another technique you can use to handle ambiguous use cases,” Linkov said. “What excites me is a future of having a catalog of models for specific tasks or a library of prebuilt task components that you can connect together to [build] resilient, fast, and easy-to-use assistants.”
