OpenAI Debuts GPT-4 Multi-Modal Generative AI Model With a Sense of Humor

OpenAI has officially revealed the new GPT-4 large language model, upgrading the engine underlying ChatGPT and other generative AI applications. GPT-4 also offers multi-modal input options and can simultaneously process text and images, providing a response incorporating both kinds of input.

GPT-4 Galore

GPT-4 is available through ChatGPT Plus, the premium subscription version of the AI chatbot. Developers who want to incorporate GPT-4 can sign up for the API waitlist. The LLM is priced for enterprise customers at $0.03 per 1,000 prompt tokens, the information input by the user, and $0.06 per 1,000 completion tokens, the AI’s response. Tokens are raw text components, and 1,000 tokens equal approximately 750 words.
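To make the pricing concrete, here is a rough back-of-the-envelope sketch in Python. It uses only the figures quoted above ($0.03 and $0.06 per 1,000 tokens, and roughly 750 words per 1,000 tokens). The function and constant names are hypothetical, chosen for illustration, and the word-to-token ratio is an approximation, so treat the results as estimates rather than a bill.

```python
# Illustrative cost estimate based on the prices quoted in the article.
# All names here are hypothetical; this is not part of any OpenAI library.

PROMPT_PRICE_PER_1K = 0.03      # USD per 1,000 prompt tokens
COMPLETION_PRICE_PER_1K = 0.06  # USD per 1,000 completion tokens
WORDS_PER_1K_TOKENS = 750       # approximate ratio cited in the article


def words_to_tokens(words: int) -> int:
    """Approximate the token count for a given number of words."""
    return round(words * 1000 / WORDS_PER_1K_TOKENS)


def estimate_cost(prompt_tokens: int, completion_tokens: int) -> float:
    """Estimate the USD cost of one request from its token counts."""
    prompt_cost = prompt_tokens / 1000 * PROMPT_PRICE_PER_1K
    completion_cost = completion_tokens / 1000 * COMPLETION_PRICE_PER_1K
    return prompt_cost + completion_cost


if __name__ == "__main__":
    # A 1,500-word prompt answered with a 750-word response.
    cost = estimate_cost(words_to_tokens(1500), words_to_tokens(750))
    print(f"Estimated cost: ${cost:.2f}")
```

Under these assumptions, a 1,500-word prompt (about 2,000 tokens) with a 750-word reply (about 1,000 tokens) comes to roughly $0.12, with the prompt and the response each contributing about $0.06.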

Apps built with GPT-4 will accept both text and images, as opposed to the text-only setup of GPT-3 and GPT-3.5. OpenAI trained GPT-4 for six months using public and licensed databases. The company claims the new model performs at a human level on some professional and academic benchmarks. Microsoft revealed that the Prometheus LLM underlying its new Bing AI chat is also GPT-4, as had been previously rumored. The new Duolingo generative AI features are also built with GPT-4.

“It is more creative than previous models, it hallucinates significantly less, and it is less biased. It can pass a bar exam and score a 5 on several AP exams,” OpenAI CEO Sam Altman explained on Twitter. “We have had the initial training of GPT-4 done for quite awhile, but it’s taken us a long time and a lot of work to feel ready to release it. We hope you enjoy it and we really appreciate feedback on its shortcomings.”

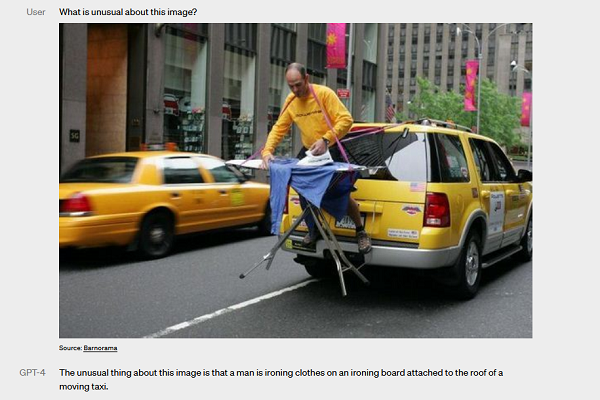

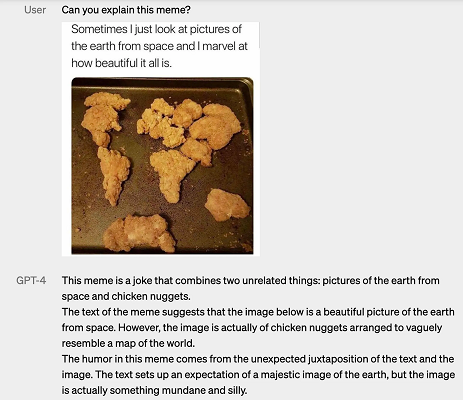

The new model also allows for more customization within the API and, eventually, ChatGPT, so that users can set a standard response style, whether in a computer coding language or iambic pentameter. The visual input option means users can ask GPT-4 to explain or caption an image. It can even explain a joke, as seen in the example above. Currently, the visual component is limited to OpenAI’s partner, Be My Eyes, and its Virtual Volunteer feature.

“We look forward to GPT-4 becoming a valuable tool in improving people’s lives by powering many applications,” OpenAI wrote in its announcement. “There’s still a lot of work to do, and we look forward to improving this model through the collective efforts of the community building on top of, exploring, and contributing to the model.”
