Kore.ai Brings Generative AI to Enterprise Virtual Assistants

Conversational AI startup Kore.ai has upgraded its platform for building customer service and other enterprise virtual assistants with the option to incorporate generative AI through OpenAI’s GPT-3 and other large language models (LLMs). Generative AI technology streamlines the creation of branded assistants, minimizing training time while enhancing their ability to engage with customers.

Generative Kore

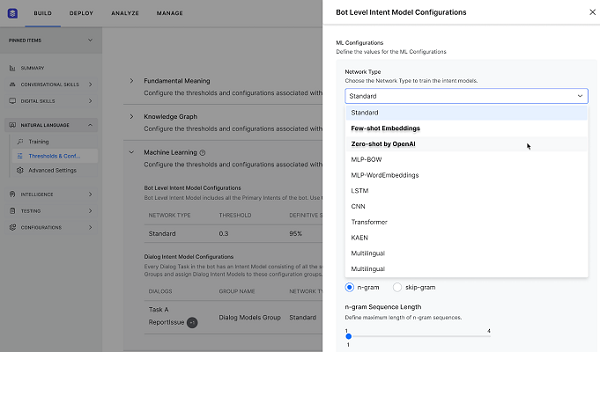

Kore’s virtual assistant platform helps companies build, test, and deploy voice and text-based customer service agents. The upgrade to the Kore.ai Experience Optimization (XO) Platform adds generative AI alongside other improvements. Generative AI models allow Kore to tailor content to the customer’s needs and respond appropriately to changes in context. Kore users can apply GPT-3 or other models depending on their needs. LLMs remove the need to supply training data or write questions and answers manually. The AI handles all of the conversational aspects of the interaction, including contextual content. This simplifies and speeds up the setup and rollout of a business’s virtual assistant, with quality comparable to ChatGPT or other conversational generative AI products.

“It’s not just about the quality of responses; it’s also about privacy concerns around customer data. You don’t want to send that information to the cloud, even if it’s hosted on Azure,” Kore.ai CTO Prasanna Kumar Arikala told Voicebot in an interview. “A second element is [intellectual property] data. Customers don’t want their data to be used to train [AI] for other companies. For that reason, we built a hybrid model that has the capabilities of LLMs comparable to OpenAI but customized to our customers. Even with no training, they can build a bot that has 95%+ accuracy.”

Kore Growth

Kore’s latest platform update fits with the company’s expanding range of features. Last year, Kore introduced a new healthcare-focused service called HealthAssist with HIPAA-compliant interactions for healthcare providers and insurance groups. That followed a $3.5 million investment from Nvidia, part of a deal to add Nvidia’s Riva synthetic voice generator to Kore. The Nvidia investment was a small fraction of the $70 million Kore raised in late 2021. The new version of Kore’s platform, including generative AI, expands on those previous updates and enables Kore to offer its services to many more companies that previously might not have had the staff to set up and manage a virtual assistant system.

“One of the biggest advantages of an LLM is [that it can] generate an answer based on the context of the question from a large text. So for enterprise, previously, if they wanted to enable knowledge from different documents for a virtual assistant, they had to enter those documents and extract the answers and then train [the AI on] the questions,” Arikala said. “Now, you can just upload any document, even just point to a content management system. What happens is, with our LLM, [the AI] can actually understand user input, then respond not just with a part of a page of a document but with a specific answer generated with AI. And it’s not just a one-time connection. You can synchronize the data to stay up to date.”
