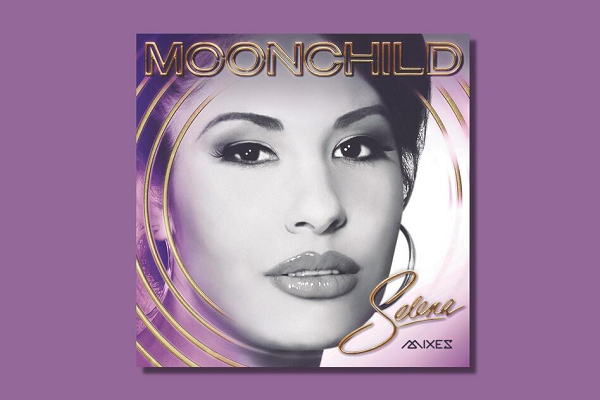

New Selena Album Synthetically Ages Deceased Singer’s Voice

Selena Quintanilla passed away 27 years ago, but a new album of remixed songs has synthetically aged her voice to approximate how she might sound were she alive today. The 13 tracks on the new “Moonchild Mixes” album feature the digitally adjusted voice, which straddles the line between audio editing and fully synthetic media as such technology becomes increasingly familiar and commonplace.

Selena Now

To create the ‘modern’ sound of Selena’s voice, Q Productions, the label run by Selena’s family, isolated her vocal tracks from the original recordings and applied digital tools so the songs sound as if sung by a woman nearly four decades older than the teenager who first recorded them. Unlike AI-generated synthetic voices, however, the adjustments were made manually: the producers lowered her voice by a semitone and added body to the overall sound to mimic how voices change with age. The result is more sophisticated than a quick pass through Auto-Tune, but it remains closer to a special effect than to a whole new voice model.

Adjusting the apparent age of a performer’s voice is becoming more common, especially when new stories are told in franchises that date back decades. Star Wars TV shows have featured de-aged versions of the voices of both Darth Vader and Luke Skywalker, courtesy of voice cloning startup Respeecher. The same kind of technology can also recreate the voices of people who have died as they sounded in their prime, as synthetic speech startup Resemble AI did for Netflix’s recent Andy Warhol documentary. As these uses multiply, defining the terms and boundaries of synthetic media will become more important. Selena may have a full voice clone someday, but her voice on the new album is more of a remaster than a complete reproduction.

“For me, this is an extreme form of audio editing — similar to what is commonly used in music mixing and editing. You’ve probably seen similar stuff in videos, for example, how The Wire was remastered in HD with a 16:9 aspect ratio,” Resemble AI CEO Zohaib Ahmed explained to Voicebot. “However, the limitation to this approach is that you can only shift the pitch and other high-level features to a certain degree before the audio becomes distorted. Moreover, you definitely don’t have the freedom to tweak the performance from the original recording. Neural synthetic speech methods are capable of performing tasks like these at a much faster pace, with far more flexibility.”
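To put the “one semitone” adjustment in concrete terms: in standard 12-tone equal temperament, one semitone corresponds to a frequency ratio of 2^(1/12), so lowering a voice by a semitone multiplies its pitch by roughly 0.944. The short Python sketch below shows only that arithmetic. The function names are illustrative, and nothing in the reporting indicates which tools Q Productions actually used.

```python
def semitone_ratio(semitones: float) -> float:
    """Frequency ratio for a shift of `semitones` in 12-tone equal temperament.

    Negative values lower the pitch; +12 doubles the frequency (one octave up).
    """
    return 2.0 ** (semitones / 12.0)


def shift_frequency(freq_hz: float, semitones: float) -> float:
    """Return `freq_hz` shifted by the given number of semitones."""
    return freq_hz * semitone_ratio(semitones)


if __name__ == "__main__":
    # One semitone down, the size of the shift described for the album
    print(f"One semitone down: x{semitone_ratio(-1):.4f}")  # x0.9439
    # Hypothetical example: a sung 220 Hz note lowered by one semitone
    print(f"220 Hz -> {shift_frequency(220.0, -1):.1f} Hz")  # 207.7 Hz
```

A pitch ratio alone does not capture vocal aging. The producers also “added body” to the sound, which this sketch does not model. Ahmed's point about distortion also applies: the further the pitch and other high-level features are pushed from the original recording, the more audible the processing tends to become.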
