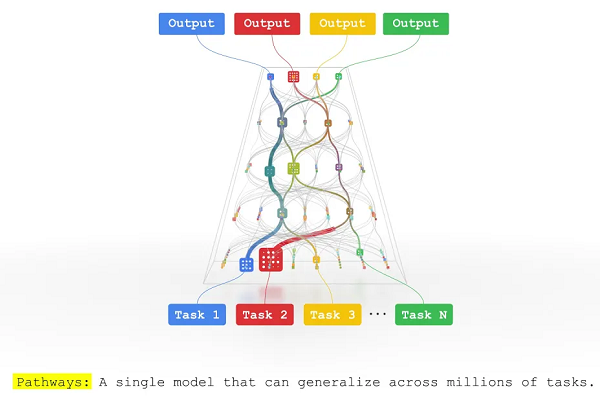

Google Debuts Multitasking AI Model ‘Pathways’

Google has unveiled a new model for artificial intelligence capable of completing a far broader array of tasks than the single-purpose designs currently in use. The Pathways AI model envisions stitching together today's specialized systems into a multimodal generalist. That flexibility might enable the AI to perform more like a human brain, with all the benefits and drawbacks such a neural network implies.

Neural Google

The standard AI model is trained in one way to perform one task. Blending enough of these algorithms together produces AI engines for voice assistants and other software that appear monolithic to users, who don't see the complex weave within. Google claims Pathways can condense all of those single-minded algorithms into one versatile neural network that can multitask, both in the duties it performs and in how it learns. That is a stark contrast to the current approach of starting from scratch for each new feature taught to an AI, as though it had forgotten everything it knew, which demands a lot of extra time and data from engineers.

“That’s more or less how we train most machine learning models today. Rather than extending existing models to learn new tasks, we train each new model from nothing to do one thing and one thing only (or we sometimes specialize a general model to a specific task). The result is that we end up developing thousands of models for thousands of individual tasks,” Google Research senior vice president Jeff Dean explained in a blog post about Pathways. “Instead, we’d like to train one model that can not only handle many separate tasks, but also draw upon and combine its existing skills to learn new tasks faster and more effectively.”

An AI built on the Pathways model would retain its previous training in its neural network and draw on it in the future. The AI could apply knowledge from existing skills to learning new ones, weaving together the points where disparate concepts share similarities. Dean compared this contextual recall to how mammalian brains work. He suggested that a Pathways-based AI taught to use aerial photos to estimate elevation could extend that knowledge to predicting how a flood might travel through a mountain valley, without the need to train a new algorithm specifically for that task, as the standard method would require today.

Sensible AI

To build on the flexible responses of models using Pathways, Google is applying a similar principle to make Pathways multimodal in how it collects data. Today, a typical neural network can process text, audio, or video, but not all three. Google envisions Pathways as advanced enough to collate all three kinds of data and understand how they interact, with all three feeding the decision-making within the Pathways neural network. Because the system can translate data from one format to another, Pathways could be used on its own or to augment existing systems that struggle to collect enough data without opening up to new modes of communication.

“People rely on multiple senses to perceive the world. That’s very different from how contemporary AI systems digest information. Most of today’s models process just one modality of information at a time. They can take in text, or images or speech — but typically not all three at once,” Dean said. “That’s why we’re building Pathways. Pathways will enable a single AI system to generalize across thousands or millions of tasks, to understand different types of data, and to do so with remarkable efficiency – advancing us from the era of single-purpose models that merely recognize patterns to one in which more general-purpose intelligent systems reflect a deeper understanding of our world and can adapt to new needs.”