Amazon Unleashes Over 50 New and Improved Alexa Developer Tools at Alexa Live

Amazon’s annual Alexa Live event showcased dozens of new and improved features for the voice assistant centered around developers and their creations. The day-long series of presentations and announcements covered a broad range of themes. The upgraded developer tools and discovery features merit a closer look; the new shopping and monetization options are covered in a separate post.

Developing Alexa

Amazon called this year’s Alexa Live its single largest release of new developer tools ever, citing more than 50 of them altogether. By comparison, the company revealed 31 new features at Alexa Live last year. Far more people are also building Alexa skills: the company claims more than 900,000 registered Alexa developers, more than 100,000 of whom joined in the last year alone.

“Developers and device makers have been core to Alexa’s evolution and they will be critical to the future of our entire family of devices and services,” Alexa chief technology evangelist Jeff Blankenburg wrote in a blog post about Alexa Live and the new features. “And it’s exciting to reaffirm our commitment to the Alexa builder community and share the latest product advancements and insights on the future of voice.”

One of the most significant advances at Alexa Live in 2020 was the deep learning tool Alexa Conversations. This year, Amazon expanded Alexa Conversations to every English-speaking Alexa locale and began early tests in German and Japanese. To make voice app development even more accessible, Amazon debuted the new Alexa Skill Components feature in beta. Skill Components streamline skill building by plugging standard voice app elements into the developer’s models and databases, so the basics don’t have to be coded over and over. Alexa Entities, which Amazon has now made widely available, does the same for information. The tool lets third-party skills draw on Alexa’s general knowledge about the world instead of requiring each developer to duplicate whatever part of the encyclopedia they need.

The Alexa Skill Design Guide is getting a refresh. Get insight into voice-first design methodology from the #SkillBuilder community that covers the full development lifecycle. pic.twitter.com/PKYmFH0Oia

— Alexa Developers (@alexadevs) July 21, 2021

Amazon also introduced tools to help Alexa handle the specialized or unusual words that third-party skills rely on. The new Customized Pronunciations feature lets developers directly teach Alexa the names, titles, or scientific jargon used in their voice app, so the assistant pronounces them correctly and recognizes them when a user says them. The new Skill A/B Testing Service lets developers trial any new or updated skill before release, using the resulting data to time the launch and plan future updates. All of the new and improved features are covered in the new edition of the Alexa Skill Design Guide that Amazon announced at the event.

Discovering Voices

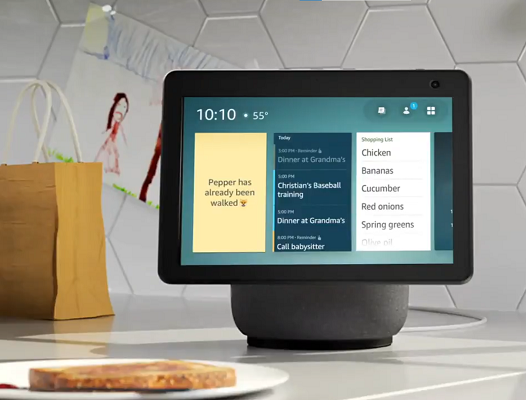

Several of the new features help developers boost discovery of and engagement with their Alexa apps, especially apps that use screens or multimodal elements. That’s where the new APL Widgets come into play. Designed for smart displays and other Alexa devices with screens, widgets let users jump to new content or other parts of a skill the developer wants to feature by tapping an icon on the screen. The screen can also highlight useful Alexa skills the user might not know about through the new Featured Skill Cards, which promote specific voice apps as the display rotates through images while idle. For now, interested brands and developers have to apply to Amazon directly to be included.

Amazon also expanded and improved existing features, like the name-free interaction (NFI) toolkit introduced last year. NFI lets Alexa users say keywords rather than specific skill names, with the voice assistant then inferring which skill to open. Building on NFI’s success, Amazon now lets developers apply to have their skill suggested when Alexa users ask for generic services like exercise, a game, or a story. The voice assistant can also make personalized suggestions for skills the user might like, or follow its answer to a question with a suggestion for a voice app the user hasn’t mentioned, such as a cooking app after a question about a kind of food. All of these new discovery features are still in testing, and developers have to apply to join the program, though Amazon plans to make them a standard part of Alexa skill promotion.

“Our vision for Alexa is to be an ambient assistant that is proactive, personal, and predictable, everywhere customers want her to be. This enables them to do more and think about technology less. It’s our long-term vision, which means there’s a lot of work to be done to make this a reality,” Blankenburg explained. “These tools and features will make it easier to drive discovery, growth, and engagement, unlock more ways to delight customers, and address a few key focus areas.”