WeWalk Smart Cane Guides Visually Impaired During Pandemic With Voice Assistant and Ultrasonics

Canes have enabled people with impaired vision to travel safely on their own for a very long time. British tech startup WeWalk upgrades that ancient tool with a voice assistant built into its eponymous ‘smart cane’ as part of a suite of high-tech tools for navigating safely. WeWalk, which recently joined Microsoft’s AI for Accessibility program, highlights how voice AI can be applied to improving accessibility, especially as the COVID-19 pandemic has disrupted the lives of so many people with disabilities.

Smart Cane

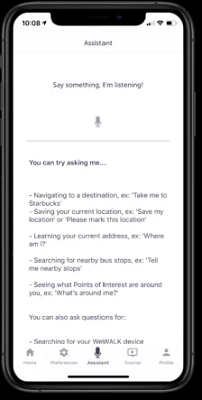

The $600 WeWalk cane looks like a standard white cane, except for the handle, which includes a touchpad, speaker, and sensors to help the user get around. An ultrasonic sensor probes for waist-high obstacles that the tip of the cane might miss, and the cane vibrates when an echo indicates a potential walking hazard. The cane pairs with a smartphone app to run a voice assistant that users can activate with the touchpad. The voice assistant can tell users where they are, point out nearby public transport stops, identify nearby buildings and landmarks, book an Uber, and provide real-time walking directions to a specified destination in a way more useful to those who can’t see than standard turn-by-turn directions. The voice assistant in the cane is meant to be versatile enough to handle any task of that nature.

“Before WeWalk, you needed several apps for navigation, maps, public transport, and exploration,” WeWalk head of research and development Jean Marc Feghali told Voicebot in an interview. “We crammed them all into one app, controlled by the cane. The voice assistant is then connected by a microphone built into the cane.”

WeWalk began as a successful crowdfunding campaign in 2018. The first versions of the smart cane launched in 2019, and because they can connect to the internet, they can take firmware and software updates that give them a longer life. The voice assistant is still in beta; a fully approved version, including support for new languages, is planned and will be transmitted to all of the purchased canes. WeWalk is also about halfway through Microsoft’s AI for Accessibility program and incorporates Azure AI for speech recognition and related features. Feghali has Leber’s Congenital Amaurosis, which makes him essentially blind at night. He’s one of several members of the WeWalk team with visual impairments of some kind. Feghali attributed some of WeWalk’s success to the contributions of people with limited eyesight to a project aimed squarely at them, in contrast to other accessibility-related technology.

“The issue is that [tech] is designed for sighted people and then uses voice to kind of compensate, which is ok, but not enough,” Feghali said. “We have to build from the ground up, and it’s the same case with voice assistants. It shouldn’t be compensating for not having sight; it should be built for those without sight. So, we moved from using other voice assistants to making our own with Microsoft’s range of APIs.”

AI Accessibility

Accessibility tech tools are being produced at a rapid clip. They range from powerful, unique platforms to small alterations in very commonly used services. The pandemic has boosted interest in the concept of voice AI for accessibility too. The Alexa Care Hub feature leverages the voice assistant as a kind of remote caretaker of older relatives living alone, while social media app TikTok started adding text-to-speech tools for those who can’t see well enough to read words in the posted videos.

“There’s definitely much more of an eye on the field since the pandemic started,” Feghali said. “People can’t interact in person, so [AI] can be an aide.”

The experiments can be quite creative too, as with Google’s prototype device designed to help visually impaired people go on runs safely. It uses an Android smartphone app to track painted lines on the ground and plays different sounds in earbuds to tell runners in what direction and how far they have drifted from their path. Though the prototype has been successful, it may be some time before it becomes widely usable by the community, and the potential hazards may make some reluctant to try it out.

“Using AI for assisted technology is very different from AI emojis or virtual reality because it’s used on a daily basis by people who can’t afford to have it mess up,” Feghali said. “Designing accessible tech is like designing buses. You want it to fade into the background. It has to be reliable; you can’t be as experimental as you might like.”

The visually impaired can find plenty of less hazardous tools through Alexa and Google Assistant, however. The Show and Tell feature for Echo smart displays and the Lookout feature for Android devices both use AI to identify labels on food containers, while Google Maps can use voice cues to guide people with limited sight. The ubiquity of canes among those with impaired vision could make WeWalk extra enticing, even at $600, because it’s something so many potential customers understand deeply.

“The idea behind WeWalk is not about changing the basis of canes, which are such a crucial tool and symbol of independence,” Feghali said. “But it’s a device from the stone age and can do so much more.”
