New ToxMod AI Tool Monitors Game Chat for Toxic Speech

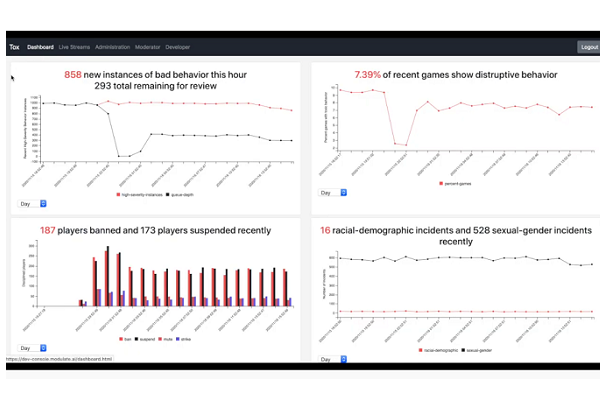

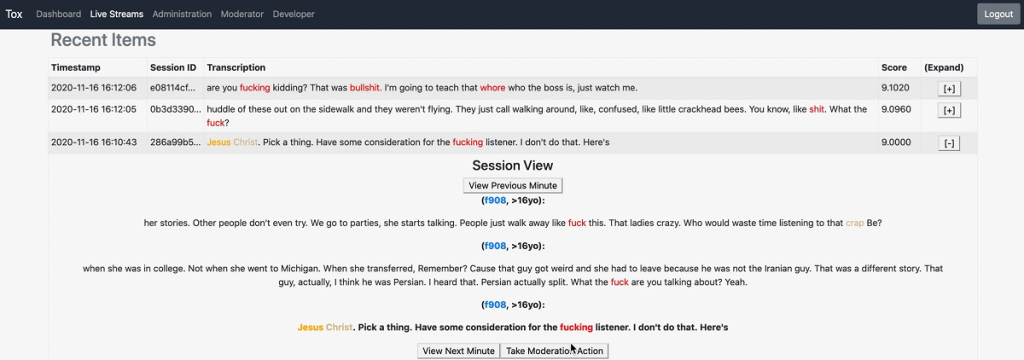

Voice technology startup Modulate has created a new artificial intelligence tool called ToxMod that can detect and flag problematic and toxic speech in real-time video game voice chat. ToxMod alerts moderators when it hears racist slurs, threats, or anything else the developers want to know about. The AI uses context and emotional indicators to judge whether something said actually rises to the level of a problem.

AI Detox

Voice chat during video games is a great way to enhance a game, but stories about egregiously bad behavior can make players, especially parents of younger players, leery of using it. ToxMod uses AI as an aid to human moderators stretched thin trying to spot potential issues, determine whether they are real problems, and respond accordingly. To make ToxMod, Modulate leveraged the AI it developed for customized “voice skins,” which players can use to alter how they sound during game chats. The result essentially offers advanced real-time transcription and evaluation of spoken language, but with a setting and purpose that differ from those of the conference call AI services that have grown in popularity of late. And it arrives at a moment when the gaming world is looking for ways to welcome more customers, for both altruistic and obvious business reasons.

“There have been a lot of technical innovations that have finally enabled this sort of technology,” Modulate CEO and co-founder Mike Pappas said in an interview with Voicebot. “And there’s been a substantial increase in interest because of a shift in culture.”

“Transcription technology has gotten really good over the last couple of years,” added CTO and fellow co-founder Carter Huffman. “It’s not always cost-effective at a huge scale, though. There’s a big difference between 1,000 players at once versus trying to moderate millions of players.”

Game On

ToxMod’s algorithms are extremely flexible, which means the AI can be used on a wide range of game types without being either too permissive or too broad in what it flags to moderators. When it does spot an issue, moderators can decide what to do about the person who said it. The AI will improve over time by learning from how moderators respond, and it could theoretically be set to act on its own, sending warnings or even ejecting a particularly badly behaved player. Defining where that line falls can be tricky. That’s why ToxMod was trained to grasp not only what is being said but also how it’s being used and the context for the language to determine if there’s a problem. What’s disruptive in one setting may be entirely appropriate enthusiasm in another.

“We have default settings that generally make sense, but we can customize specific levers,” Pappas said. “In a violent game like Call of Duty, there may be less concern about any violent talk as opposed to a more child-friendly game, which may be prioritizing violent speech as a problem.”

“[The model] understands nuance and if someone [is] being aggressive or defensive,” Huffman said. “Nuance and understanding terminology for that language and game, that’s where our technical innovation comes in.”

Pappas and Huffman wouldn’t say what games or platforms will be using ToxMod in the near future but did confirm it will operate on console and PC games and could come to mobile devices. Modulate has raised $6 million from investors, including $4 million earlier this year, in a round led by Crush Capital. Connecting AI to gaming is becoming more popular in other ways as well, with platforms themselves keen to experiment with voice assistants and AI. There are also plenty of smaller firms jumping in, with HCS selling voice controls in Star Wars: Squadrons, and Fridai, part of the Microsoft for Startups accelerator, creating video game-specific voice assistants. Making sure people are comfortable with the actual experience is crucial to the future of gaming, though, and ToxMod could help clean it up.
