Google Tests AI-Powered ‘Look to Speak’ Tech on Android

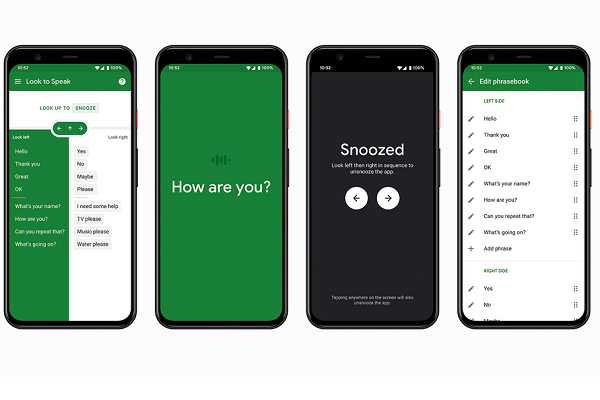

Google has debuted the Look to Speak accessibility app for Android devices, enabling people with motor and speech impairments to pick out custom phrases on the screen with their eyes and have them spoken aloud. The new app leverages artificial intelligence to track where people are looking and marks the latest in a spate of AI-supported accessibility features from Google, especially for Android.

Gaze Speech

Look to Speak broadly functions like several existing machines that use cameras to track where eyes focus and select the letter or word in the user’s gaze. The most famous example is probably the one used by the late Sir Stephen Hawking. Traditionally, this has required specialized and sometimes bulky equipment, like Hawking’s cutting-edge wheelchair, to accurately translate where someone is looking into typed words that can be run through a text-to-speech program. Look to Speak condenses all of that into an Android device, displaying pre-written phrases that users can look at, then speaking the chosen phrase out loud. The phrases can be customized to say anything the user wants, either on their own or with the help of someone else. Then, it just takes flicking their eyes in different directions, with adjustable sensitivity, to pick which phrase on the screen will be read out. In its ideal form, people with limited speaking and motor control could regain their voice.
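For readers curious how picking a phrase by eye direction might work in principle, here is a minimal Python sketch. It is not Google's implementation. It assumes, purely for illustration, that an eye tracker reports a horizontal gaze offset between -1 (far left) and 1 (far right), that the phrases are split into a left group and a right group, and that each deliberate glance keeps one group until a single phrase remains. The function names, the offset scale, and the default sensitivity value are all hypothetical.

```python
from typing import List, Optional, Sequence


def classify_gaze(horizontal_offset: float, sensitivity: float) -> Optional[str]:
    """Turn a gaze offset in [-1, 1] into 'left', 'right', or None.

    `sensitivity` is a hypothetical threshold: a higher value means the
    eyes must move further before a glance counts as a selection.
    """
    if horizontal_offset <= -sensitivity:
        return "left"
    if horizontal_offset >= sensitivity:
        return "right"
    return None  # glance too small to count


def select_phrase(
    phrases: Sequence[str],
    offsets: Sequence[float],
    sensitivity: float = 0.5,
) -> Optional[str]:
    """Narrow a phrase list by repeated left/right glances (assumed scheme)."""
    candidates: List[str] = list(phrases)
    for offset in offsets:
        if len(candidates) == 1:
            break
        direction = classify_gaze(offset, sensitivity)
        if direction is None:
            continue  # ignore small, unintentional eye movements
        mid = (len(candidates) + 1) // 2
        # Keep the half of the list shown on the side the user looked at.
        candidates = candidates[:mid] if direction == "left" else candidates[mid:]
    return candidates[0] if len(candidates) == 1 else None


phrases = ["Hello", "Yes", "No", "Thank you"]
chosen = select_phrase(phrases, offsets=[-0.8, 0.1, 0.9])
print(chosen)
```

In this sketch, the small middle glance (0.1) falls under the threshold and is ignored, which is the role an adjustable sensitivity setting would play: raising it filters out more incidental eye movement, lowering it helps users with a limited range of motion. A real app would hand the chosen phrase to a text-to-speech engine.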

“Perhaps my favorite feature is the ability to personalize the words and phrases—it lets people share their authentic voice,” speech and language therapist Richard Cave, who worked with Google on testing Look to Speak, wrote in a blog post about the new feature. “What was amazing to see was how Look To Speak could work where other communication devices couldn’t easily go—for example, in outdoors, in transit, in the shower and in urgent situations. Now conversations can more easily happen where before there might have been silence, and I’m excited to hear some of them.”

Experiment Accessible

Look to Speak was born from Experiments with Google, but is just one of the rapidly expanding menu of accessibility features Google has introduced, with the pace accelerating in the last year or so. Another experiment, Project Guideline, is developing a way for blind and visually impaired people to go on runs safely by using an Android smartphone app. The most significant accessibility feature added is arguably Android Action Blocks, which was released universally in May after months of experimenting. Android devices also introduced Sound Notifications, which alert people who can’t hear critical noises like alarms or crying babies. The Lookout feature on Android gained the ability to read food labels, while Google Maps now offers voice cues to guide people with limited sight. There are also more long-term efforts like Project Euphonia, which works to train voice assistants to understand those with speech impairments.

Along with the new features, the tech giant has been broadening the availability of existing tools. For instance, the Android 11 Voice Access feature became usable on devices going back to Android 6.0. As for Look to Speak’s purpose, it complements the eye-tracking controls for Google Assistant that debuted in the spring. Experimental or not, the fact that a tech giant like Google is investing the resources to make these features a reality suggests they will be part of the broader tech accessibility space in the future. The company even works with celebrities like Paralympian Garrison Redd to simultaneously come up with new ideas and bring the existing features to the community of people with disabilities.
