Google App Actions for Android Now Available to All Developers and Bring Google Assistant Strategy into Sharper Focus

The focus of yesterday’s Google Assistant Developer Day was decidedly not smart speakers. While smart displays were referenced several times and there are new features for account linking and monetization, the big focus was Google Assistant on the smartphone. “We introduced Google Assistant back in 2016 with the mission of helping people get things done more easily … Along our journey, we’ve heard from users that they want more than a voice-only digital assistant and hence we’ve expanded on this idea to envision an ambient digital assistant that moves with you throughout the day across devices, understanding context and intent, ready to help whenever you need it,” said Danny Bernstein, managing director, partnerships for Google Assistant, in a pre-recorded video segment.

Enabling Google Assistant to Enhance the Mobile App Experience

That focus on a “digital assistant that moves with you throughout the day” leads directly to using Google Assistant more on the smartphone. The first new features introduced for developers were App Actions, which are now available to all developers. App Actions debuted at Google I/O in 2019 and have been available on a limited basis. Google first created 20 built-in intents for four industry verticals: finance, transportation, food and drink, and health and fitness. At the event, the company announced an expansion to 10 industry verticals and 60 built-in intents. The new industry verticals are communications, games, productivity, shopping, social, and travel.

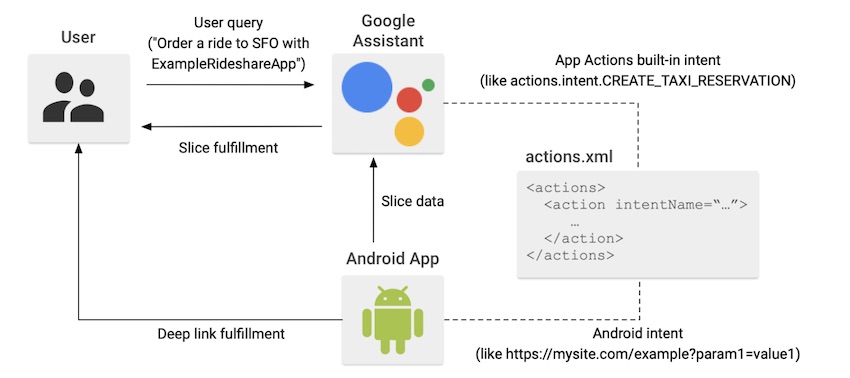

Importantly, Google also announced custom intents with deep-linking capabilities for App Actions. Google positions all of the built-in intents as extending an “Android app’s functionality to Google Assistant.” According to newly updated Google Assistant documentation, developers will be able to:

- Deep link into app functionality from Assistant. Connect your existing deep links to user queries matching predefined patterns.

- Display information from your app directly in Assistant. Provide users with inline answers and simple confirmations without changing context.

“From a user’s perspective, App Actions behave like shortcuts to parts of your Android app. When a user invokes an App Action, Assistant matches their request to a registered built-in intent and its corresponding fulfillment,” the documentation adds. This is all pretty straightforward. Users can employ Google Assistant not only to open a mobile app but also to navigate to a specific area or even execute a task. That is the app-centric approach. Google also allows developers to share information from the app back to Google Assistant, where it is displayed (and presumably read out as text-to-speech) in a Google Assistant conversation.
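The two fulfillment styles described above, a deep link into the app versus an inline answer shown in the Assistant conversation, can be pictured with a small conceptual model. This is only a sketch of the idea, not Google's API: the intent names, the `exampleapp://` scheme, and the `fulfill` function are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Union
from urllib.parse import quote


@dataclass(frozen=True)
class DeepLink:
    """Fulfillment that opens a specific screen inside the app."""
    uri: str


@dataclass(frozen=True)
class InlineAnswer:
    """Fulfillment that surfaces app data inside the Assistant conversation."""
    text: str


Fulfillment = Union[DeepLink, InlineAnswer]

# Hypothetical registry: matched intent name -> fulfillment handler.
# Neither the intent names nor the URI scheme come from Google's catalog.
REGISTRY: Dict[str, Callable[[Dict[str, str]], Fulfillment]] = {
    "EXAMPLE_OPEN_ORDER": lambda p: DeepLink(
        "exampleapp://order?item=" + quote(p["item"])
    ),
    "EXAMPLE_SHOW_STATUS": lambda p: InlineAnswer(
        f"Order {p['order_id']} is on its way."
    ),
}


def fulfill(intent: str, params: Dict[str, str]) -> Fulfillment:
    """Look up the handler registered for a matched intent and run it."""
    handler = REGISTRY.get(intent)
    if handler is None:
        raise KeyError(f"no fulfillment registered for {intent!r}")
    return handler(params)
```

In this model, `fulfill("EXAMPLE_OPEN_ORDER", {"item": "iced latte"})` hands control to the app through a deep link, while `fulfill("EXAMPLE_SHOW_STATUS", {"order_id": "42"})` keeps the user inside the Assistant session. That split is the one the following discussion of developer concerns turns on.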

Some Android developers may be wary of passing fulfillment to Google Assistant, since that effectively disintermediates their relationship with the user. The Google Action developer community regularly expresses concern about Google’s first-party fulfillment crowding out the opportunity for third parties to succeed. Whether or not this critique is justified in all cases, shifting a potential user session from your app, where you have full ownership of the user relationship, to Google Assistant will not be embraced by everyone.

The most common use cases of App Actions to start will be the in-app search intent (GET_THING) and the open app pages intent (OPEN_APP_FEATURE). These are deep-linking approaches to content and to a service, respectively, and both are listed in the Common built-in intents category. This is a positive development for Android app publishers. It can reduce some of the friction users face in locating an app, opening it, and then navigating to the feature they want. Google Assistant can help fulfill that intent far more efficiently in most cases.
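As a rough illustration of how these two intents could resolve to deep links, the sketch below maps intent parameters onto URL query parameters. It is a simplified model rather than the actual App Actions configuration format: the `exampleapp://` URLs, the `q` and `screen` query keys, and the parameter names `thing.name` and `feature` are assumptions made for the example.

```python
from urllib.parse import urlencode

# Hypothetical per-intent configuration: a base deep link plus a mapping
# from intent parameter names to the app's own URL query parameters.
ACTIONS = {
    "GET_THING": {
        "base": "exampleapp://search",
        "params": {"thing.name": "q"},
    },
    "OPEN_APP_FEATURE": {
        "base": "exampleapp://open",
        "params": {"feature": "screen"},
    },
}


def build_deep_link(intent, intent_params):
    """Build the deep link for a matched intent from its extracted parameters."""
    action = ACTIONS[intent]
    # Keep only the parameters the user's request actually supplied.
    query = {
        url_key: intent_params[name]
        for name, url_key in action["params"].items()
        if name in intent_params
    }
    if not query:
        return action["base"]
    return action["base"] + "?" + urlencode(query)
```

Under these assumptions, a request to search the app for running shoes would resolve to `exampleapp://search?q=running+shoes`, and a request to open the settings page would resolve to `exampleapp://open?screen=settings`. In both cases the user skips the manual steps of finding the app and navigating to the right screen.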

Bringing More Value to Voice on Mobile

Voice assistant use on smartphones has historically been limited to a few tasks. Initiating a phone call, creating and sending a text message, and starting a navigation session or search query are all common. After these, use case adoption drops off precipitously. A growing use case, though, is employing Google Assistant on Android smartphones (or Siri on iOS) to launch an app. That has some marginal efficiency benefit in many instances. However, mobile apps are simply packaged digital services. It is suboptimal to enable the use of voice to open an app but then shift users back to touch and swipe to take the next step. Enabling deep-linking to apps, and access to those packaged digital services, should increase the benefit significantly for users and result in the expanded use of Google Assistant for everyday tasks on mobile.

That is good for Google, for users, and presumably for third-party app publishers too. However, App Actions today are for single-shot requests. Once Google Assistant gets you into the app, you will generally be back to the manual navigation provided in the app experience. The Twitter example from Google Assistant Developer Day was the most interesting because the continuous conversation feature enabled the Google Assistant session to remain open and the user to execute multiple in-app searches in sequence without using the wake word. However, once that session ends, there is no voice control in Twitter by Google Assistant from what I can tell.

What will be interesting is whether the increased use of Google Assistant to launch apps and deep-link into them raises consumer expectations for voice features. This may lead mobile app providers to add at least voice navigation within their apps to accommodate user interest in having more options for using them. From a UX perspective, this will also be interesting, as it will likely require the user to shift from Google Assistant at launch to another voice service (or potentially another voice assistant) once they land in the app.

Regardless, Google clearly recognized that it was time for its large Android app user base to finally get more explicit benefit from Google Assistant. You should expect to see far more focus on voice and smartphones in the coming year and that will surely shift consumer expectations more toward voice.
