What’s Next for Almond, Stanford University’s Open-Source Virtual Assistant: An Interview with Principal Developer Giovanni Campagna

This interview by Giorgio Robino originally appeared on Medium and has been edited for length and clarity.

I first learned of Almond, the Stanford University open virtual assistant, one year ago while reading an article on voicebot.ai. I was immediately enthusiastic about the core concepts on which the project is based: user data privacy, the need for a web based on linguistic user interfaces, a distributed computing architecture, the natural language programming approach, and many other topics related to a possible next generation of the web, populated by a federation of humans and their personal assistants.

Soon I discovered that one of the principal developers of the Almond software is Giovanni Campagna, a Ph.D. student in the Computer Science Department at Stanford University and a member of the Stanford Open Virtual Assistant Lab (OVAL), who works with Professor Monica Lam. Hence the idea of interviewing him about the past and the future of Almond and of personal virtual assistants.

Introduction to Almond

Giorgio: Ciao Giovanni! To sketch out what Almond is and what it will become, could you briefly summarize the project’s history? The oldest public presentation I remember was by Monica Lam in fall 2018. Could you give us the basic concepts of the project and explain Almond’s role within the OVAL laboratory’s research?

Giovanni: The Almond project started in spring 2015 as a class project to explore a new, distributed approach to the popular IFTTT service. At the time, it was called Sabrina. Soon after, we realized we needed both a formal language to specify the capabilities of the assistant and a natural language parser to go along with it, so that end users could access those capabilities. The first publication on Almond, and the first reference under its current name, came in April 2017 at the WWW conference, where we described the architecture: the Almond assistant, the Thingpedia knowledge repository, and the ThingTalk programming language connecting everything together.

Since then, we have been working on natural language understanding, focusing in particular on the problem of cost. State-of-the-art NLU, as used by Alexa and Google Assistant, requires a lot of annotated data and is very expensive. We made two recent advancements: first, at PLDI 2019 we showed that using synthesized data can greatly reduce the cost of building the assistant for event-driven, IFTTT-style commands. Later, at CIKM this year, my colleague showed how to build a Q&A agent that can understand complex questions over common domains (restaurants, hotels, people, music, movies, and books) with high accuracy at low cost.
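The synthesized-data idea can be made concrete with a toy example. The sketch below assumes a hypothetical set of templates, each pairing an English pattern with a logical-form pattern, and expands them with slot values to produce (utterance, program) training pairs with no human annotation. The templates, the city names, and the `when ... => notify` notation are all invented for illustration; they are not ThingTalk syntax or the actual Almond grammar.

```python
from itertools import product

# Hypothetical (utterance pattern, logical-form pattern) templates.
# The notation is invented for this sketch; it is NOT ThingTalk.
TEMPLATES = [
    ("notify me when it rains in {city}", "when rain(city={city!r}) => notify"),
    ("what is the weather in {city}", "weather(city={city!r}) => notify"),
]

# Hypothetical slot values used to fill the {city} placeholder.
CITIES = ["Palo Alto", "Genoa", "Seattle"]


def synthesize(templates, cities):
    """Fill every template with every slot value, producing
    (utterance, logical_form) pairs without any human annotation."""
    pairs = []
    for (utterance, logical_form), city in product(templates, cities):
        pairs.append((utterance.format(city=city), logical_form.format(city=city)))
    return pairs


if __name__ == "__main__":
    for utterance, program in synthesize(TEMPLATES, CITIES):
        print(f"{utterance} -> {program}")
```

Even this toy version shows why synthesis is cheap: each new template yields one pair per slot value, and each new slot value yields one pair per template. A realistic grammar would need far more templates and far more varied phrasings than this sketch.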

At ACL this year, I presented how the same synthesized data approach can be used to build a multi-turn conversational interface, achieving state-of-the-art zero-shot (no human-annotated training data) accuracy on the challenging MultiWOZ benchmark. Most recently, we received a grant from the Alfred P. Sloan Foundation to build a truly usable virtual assistant (not just a research prototype), and we hope to release it in 2021.

Giorgio: What made you start developing Almond? Did you initially work on Almond as part of your Ph.D. thesis? Are you the leader of the software development? And how is the team composed? Besides the Almond project, what is your current role in the OVAL team, and what are your long-term research interests?

Giovanni: I started developing Almond as research on the ThingTalk programming language: designing a programming language that can extend the power of the assistant beyond simple commands and give the power of programming to everyone. In parallel with ThingTalk, I worked on converting natural language to code, because natural language is the medium of choice for making programming accessible. Our research found that neural networks are extremely effective for natural language programming tasks, as long as training data is available. ThingTalk is still the core of my Ph.D. thesis, but over time we shifted our focus to reducing the cost of acquiring training data.

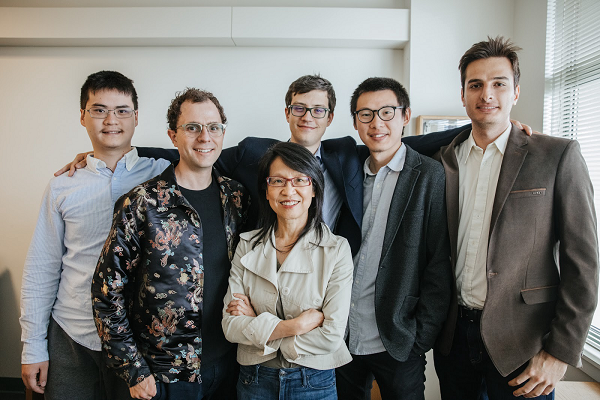

I built the original version of the Almond assistant and a large chunk of the current code, so in a way I am still its maintainer, but Almond would not have been possible without the help of my colleagues: Silei, Michael, Jackie, Mehrad, and Sina (all current Ph.D. students). Silei was the first to join after me, and he’s the second-most active developer on Almond; his research is mainly on Q&A over structured data. Michael recently defended his thesis; he worked on multi-modality, bridging GUIs and natural language. Jackie also worked on multi-modality, with a paper at UIST on mobile app interactions using natural language. Mehrad is working on multilingual support using machine translation, as well as on named entity recognition. Sina is working on Q&A over free text, paraphrasing, and error correction. Additionally, a number of MS and undergraduate students have helped on various projects.

Data Privacy

Giorgio: Data privacy is probably the foundational concept that gave birth to the Almond project. Could you explain in more depth why privacy is so important for all of us, citizens and companies alike? How is all this related to democracy and people’s freedom on the web? One problem I see in the personal assistants of the big players is that people’s data (voice conversations, which also contain background audio, e.g., at home) are processed on proprietary cloud platforms. In the best scenario, all this data is used to improve “AI black box” proprietary cloud services, feeding machine learning algorithms. In the worst case, conspiracy theorists suppose that such companies make malicious use of the data, harvesting personal end-user information to populate knowledge bases about people for further commercial use. Do you consider this last scenario an actual concern?

Giovanni: This is not an easy question, and let me preface my answer by saying this is my personal opinion, not the project’s. I think privacy is inextricable from freedom: I am not truly free if everything I do is tracked, logged, and stored forever by a company or government. I am not truly free if I can be judged in the future for anything I’ve done at any point in my life. We close the blinds so that we are free to do whatever we want in the privacy of our homes. And because so much of our lives is now conducted over the Internet, Internet privacy clearly overlaps significantly with real-life privacy.

Now, as you point out, virtual assistants, and conversational AIs in general, pose unique privacy challenges. First, state-of-the-art natural language understanding requires a lot of user data for training, which means virtual assistant providers are continuously collecting the conversations held with the assistant and having contractors listen to and annotate that data. Having somebody listen to my conversations is not very private. Reducing the need for annotated real data has been a strong focus of our research, and we believe we’re finally getting there.

Second, and most importantly, virtual assistants inherently have access to all our data through our accounts: banking, health, IoT, etc. We want the virtual assistant to access our accounts because we want the help and the convenience of natural language. But what guarantee do we have that a proprietary service won’t suck up all the data and use it for marketing purposes? Why wouldn’t a proprietary assistant provider look at our banking information to promote a credit card or mortgage product? Why wouldn’t a proprietary assistant look at the configured IoT devices to promote similar or compatible products? And with Amazon and Google dominating the online retail and ad markets respectively, it would be surprising if they did not eventually start doing that.

Open-Source

Giorgio: Could you share your point of view about the importance of open-source software in general, such as the Linux operating system?

Giovanni: I have been an advocate of free software for a long time, and I am a strong believer in the four fundamental software freedoms as purely ethical principles. The freedom to study software is what allowed me to learn how to build software before I started college, and the freedom to modify and distribute software allows people to collaborate and build something bigger than any single individual could build.

I also believe that, unlike proprietary software, free software cannot abuse the trust of users. It is trivial to detect a free software app that collects more data than it claims or does anything shady with the data, and it is trivial to fork the app and remove any privacy-invasive functionality. Hence, free software communities are very careful to earn the trust of users and protect their privacy. You can see this with Firefox, for example: although Firefox collects telemetry data, Mozilla is very careful to let people disable telemetry and not to collect more than it claims.

Linguistic Web

Giorgio: Regarding Almond’s core concepts, I have been impressed by the statement “We are witnessing the start of proprietary linguistic webs” that I found in these Almond presentation slides. Can you clarify what you mean by the Linguistic Web? Are proprietary linguistic webs an implicit reference to Google Assistant and Amazon Alexa voice-based / smart speaker-based virtual assistants?

Giovanni: What we’re referring to is that third-party skill platforms are walled gardens, controlled top-down by the assistant providers. Any company that wishes to have a voice interface must submit to Alexa and Google Assistant. As proprietary systems, these platforms can shut down competing services or impose untenable fees.

We believe instead that every company should be able to build its own natural language interface without depending on Amazon or Google, and that these natural language interfaces should be accessible to any assistant. One example of work in this direction is Schema2QA (to appear at CIKM 2020), a tool to build Q&A agents using the standard Schema.org markup. Any website can include the appropriate markup to build a custom Q&A skill for itself, and the assistant can also aggregate the data across websites.
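To make the idea of “appropriate markup” concrete, here is a minimal sketch, assuming a hypothetical restaurant website, of the kind of Schema.org data a site might publish as JSON-LD. The restaurant and all of its values are invented; the types and properties (`Restaurant`, `servesCuisine`, `priceRange`, `PostalAddress`, `AggregateRating`) come from the public Schema.org vocabulary. How Schema2QA actually consumes such markup is not shown here.

```python
import json

# Hypothetical restaurant; every value is invented for illustration.
# Type and property names come from the Schema.org vocabulary.
restaurant = {
    "@context": "https://schema.org",
    "@type": "Restaurant",
    "name": "Trattoria Esempio",
    "servesCuisine": "Italian",
    "priceRange": "$$",
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Palo Alto",
        "addressRegion": "CA",
    },
    "aggregateRating": {
        "@type": "AggregateRating",
        "ratingValue": 4.5,
        "reviewCount": 120,
    },
}


def to_script_tag(data):
    """Serialize the markup as a JSON-LD <script> element that a web page
    could embed in its HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(to_script_tag(restaurant))
```

Because the markup uses a shared, public vocabulary rather than a skill format owned by one assistant provider, any assistant that understands Schema.org could in principle read it, which is what makes aggregation across websites possible.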

Natural Language Programming

Giorgio: What kind of personal assistant user experience does Almond provide to end users?

Giovanni: First of all, I want to stress that Almond is still a research prototype. It is an experimental platform for testing our ideas, in both NLP and HCI. We have received a grant from Sloan to turn the prototype into a truly usable product. As we do that, we expect to focus on the most important skills: music, news, Q&A, weather, timers, etc. Still, the technical foundation supporting end-user programming will remain, and we will try to keep supporting it going forward.

In terms of differentiating features, I imagine the key differentiator will really be privacy rather than UX. I expect Almond to be supported on a traditional smart speaker interface, because that’s the most common use case for a voice interface. I also personally like using Almond on the PC, where free software operating systems give us an opportunity. I recently gave a talk at GUADEC (the GNOME conference) about potential opportunities there.

Natural Language

Giorgio: Do you have any news about how Almond could eventually evolve into a general-purpose personal “conversational AI”, able to sustain multi-turn conversations not only in event-based task-completion contexts but perhaps also in companion-like open-domain or chit-chat dialogs?

Giovanni: This is absolutely on our radar. First of all, we’re partnering with Chirpy Cardinal, another Stanford project, which won second place in the Alexa Prize Socialbot Grand Challenge. In the near future, we will integrate Chirpy Cardinal into Almond for companionship and chit-chat capabilities. We also imagine that the assistant will learn the user’s profile and preferences and will keep a memory of all transactions, inside and outside the agent. We have not released any work on this yet.

Stateful and Contextual Dialog Management

Giorgio: One topic I’m personally obsessed with is how to program multi-turn chatbot dialogs in contextual (closed or open) domains. That’s a goal not yet achieved by the Google and Amazon cloud-based assistants. In fact, to date both of these well-known systems surprisingly fail to maintain dialog context in multi-turn conversations, even in a domain as simple as weather forecasts. The lack of context concerns not just the conversational domain but also “time”: the voice assistants mentioned above cannot remember much of anything about previous interactions with a specific user. In what directions do you think conversational technology will evolve?

Giovanni: I think state-of-the-art assistants will gain conversational capabilities for task-oriented skills very quickly. Some, like Almond and Bixby, were built to support multi-turn interaction from the start. Others, like Alexa, will require re-engineering for multi-turn, but they will get there very soon; see also Alexa Conversations, an emerging technology for multi-turn, multi-skill experiences. Incremental learning is a much more open-ended area. There is a large body of work in this space, starting with LIA, the “teachable” assistant from CMU. I also imagine the assistant will build up a profile of the user, both by mining the conversation history and by explicitly tracking a knowledge base (KB) of the user’s information. In a sense, this is already available: the assistant knows my contacts and family relations, my location, my preferred music provider, etc. The profile will only grow over time as more features are added.

Giorgio: What is your opinion of the practical use of “statistical web crowdsourcing” (my term) in systems like Generative Pre-trained Transformer 3 (GPT-3), the autoregressive language model that uses deep learning to produce human-like text?

Giovanni: Pretraining is at the core of the modern NLP pipeline, whether it’s masked-language-model “fill in the blanks” pretraining (BERT and subsequent works), generative pretraining (GPT, GPT-2, and GPT-3), or sequence-to-sequence pretraining (T5, BART). It is key to language understanding because it can be done without supervision, so it costs significantly less than supervised training. I can only imagine that the use of pretraining will grow over time. As for GPT-3 specifically, the few-shot results are honestly impressive on a range of tasks. At the same time, the model is so large that it cannot easily be fine-tuned, so it’s quite difficult to apply to a downstream task.

Smart Speakers

Giorgio: I know you spent a lot of energy getting Almond to run on a wide range of personal computing platforms, focusing on the Android app, perhaps because smartphones are the personal computers of this era for most people. On the other hand, one possible weakness I see in Almond is the absence of a (home-based) voice interface, perhaps through an open-hardware smart speaker. Do you have any plans to let private citizens interface with Almond through a smart speaker or any other voice-based platform? What are the pros and cons of voice-first interfaces?

Giovanni: We absolutely see Almond on the smart speaker as a first-class citizen. Since fall 2019, we have partnered with Home Assistant to bundle Almond as an official add-on, so you can use Almond to control a Home Assistant-based smart speaker. That means one can build a fully open-source voice assistant stack using a Raspberry Pi, Home Assistant OS, and Almond. There are a couple of challenges in using Almond with a pure voice interface, mainly around the wake word, for which there is no easy-to-use open-source solution. (Recently, we discovered Howl from the University of Waterloo, which is also used by Firefox Voice, and we’re investigating it.) Also, building a conversational interface that works well with pure speech output is not easy. Even commercial assistants work better on a phone, where they can display links, cards, and interactive interfaces.

Giorgio: Almond now seems focused on providing an assistant to private citizens (private end users). Couldn’t the distributed architecture and the access control management you propose for private users also apply to businesses that want to provide their services to people? I imagine a scenario where an end user’s assistant talks to a company’s assistant. Could such a future extension of Almond be coupled with the Thingpedia APIs?

Giovanni: Of course! The goal of our research prototype of a distributed virtual assistant was to show how useful access control expressed in natural language can be. The use cases need not be limited to access control among consumers: it could be applied in corporate settings, and to sharing data between consumers and businesses. For an example of the latter, see this paper from HTC and NTU, which uses ThingTalk technology to audit the sharing of medical data.

The Future

Giorgio: Almond recently received support from the Sloan Foundation. As far as I understand, the new funds will support the engineering of Almond, with the goal of converting the developed prototypes into real products usable by consumers. Can you give more details and describe the next steps of the project, in both the short and the long term?

Giovanni: The short-term goal, for the next year, is to use the funds from Sloan and other foundations to build an initial product. We aim for a small initial user base of enthusiasts who care about privacy. The long-term goal is then to use this initial product to raise further funds, and then use the established product to build both a successful open-source community and an ecosystem of companies using Almond technology in their products. This should allow Almond to thrive and become self-sustaining.
