Google Assistant Beats Alexa and Siri at Answering Complex Questions: Study

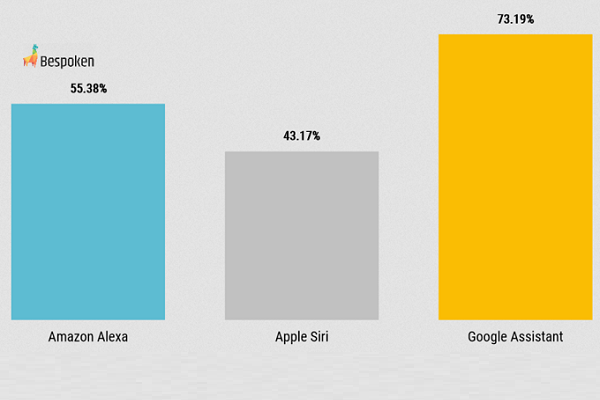

Google Assistant is better at answering complex questions than either Siri or Alexa, according to data published by Bespoken. The study, which used Bespoken’s recently developed Test Robot for voice-based hardware and software, showed that all three popular voice assistants, while capable, still lack perfect comprehension and the ability to handle a full range of interactions with people.

Complex Q&A

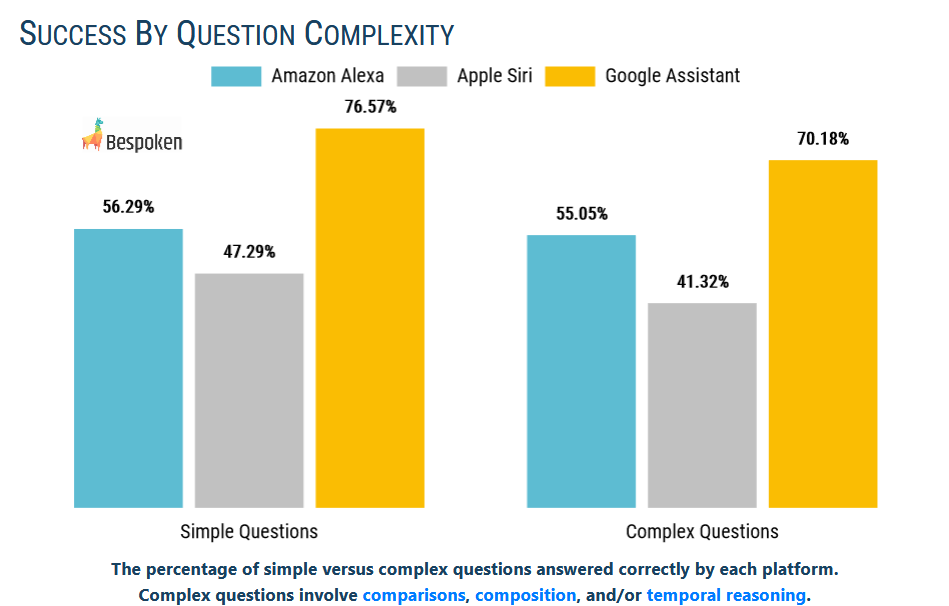

For the study, Bespoken set up an Amazon Echo Show 5, an Apple iPad Mini, and a Google Nest Home Hub. The researchers asked each voice assistant a series of questions. They defined each question as simple or complex and sorted them into subjects like movies, geography, and history. The complex questions varied in style: some covered multiple subjects, some were a non-grammatical series of keywords, and some had no answer, such as asking who the first person on Mars was. The percentage of correct answers each voice assistant gave varied depending on the subject and type of question, but the rankings were uniform. Google Assistant performed the best, with Alexa in second place and Siri lagging behind in third. Notably, all three performed only slightly better with simple questions than complex questions, suggesting their limits have little to do with how complicated the question is.

“We have two major takeaways from this initial research. First, while Google Assistant outperformed Alexa and Siri in every category, all three have significant room for improvement,” Bespoken chief evangelist Emerson Sklar told Voicebot. “These results underscore the need for developers to thoroughly test, train, and optimize every app they build for these voice platforms. Second, this process was completely automated, and we plan to continue to run these tests as well as introduce new benchmarks. This sort of automation enables not just point-in-time measurement but also continuous optimization and improvement. We know Google, Amazon, and Apple all embrace this, and we encourage others to as well.”

Future Test

The test is the first original research by Bespoken, which debuted the Test Robot in April. The robot extends Bespoken’s virtual diagnostic tool into the analog realm. It mimics human speech to see how voice apps built on different voice platforms and hardware respond. The company’s clients can then use that data to refine and upgrade their creations. It’s flexible enough for use in cars and other specialized devices with voice AI, as well as third-party apps on the major platforms, saving designers thousands of hours in manual testing. With this first test a success, the company plans to make it a regular check-up and to expand into new areas such as automatic speech recognition, personal assistants, and interactive voice response.

“Our tools are already used by thousands of developers to reliably and repeatably automate interaction with any voice platform – at a small fraction of the cost of manual testing and without ever actually talking to a device – and this capability makes it easy for us to gain real-time, unbiased, and statistically-significant data about each platform’s core behavior,” Sklar said. “The actionable insight our tests generate makes it easy to fix app defects and optimize the conversational models themselves to increase successful understanding to greater than 95%.”
