Rewriting the Moon Landing With a Deepfake Built on Synthetic Speech

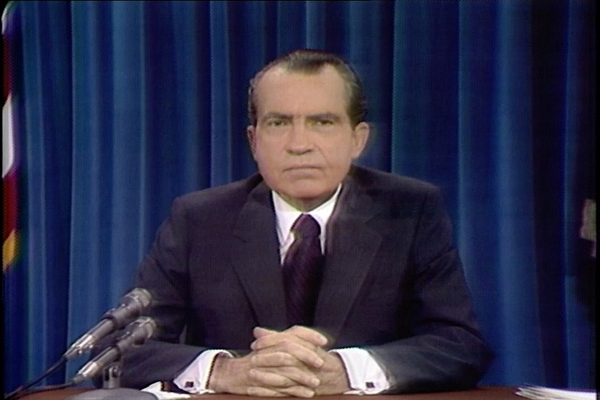

On July 20, 1969, Neil Armstrong landed safely on the Moon and U.S. President Richard Nixon gave a congratulatory speech. Half a century later and visual and voice deepfake technology can give a glimpse at an alternate reality where the landing failed. In Event of Moon Disaster, a project from MIT’s Center for Advanced Virtuality tells that story while showcasing just how advanced deepfakes have become of late.

On July 20, 1969, Neil Armstrong landed safely on the Moon and U.S. President Richard Nixon gave a congratulatory speech. Half a century later and visual and voice deepfake technology can give a glimpse at an alternate reality where the landing failed. In Event of Moon Disaster, a project from MIT’s Center for Advanced Virtuality tells that story while showcasing just how advanced deepfakes have become of late.

Moon Failure

In 1969, no one knew for certain if the Apollo 11 mission would succeed. President Nixon had two speeches written, one for success and one for if the astronauts were unable to return. The group behind the project had a voice actor record the actual contingency speech before using synthetic speech platform Respeecher to apply deep learning techniques and teach an AI to give the speech in the voice of Nixon. To combine the audio fake with a visual, video tech firm Canny AI worked out how Nixon’s face would move if he were giving the alternate speech. Combined, the resulting seven-minute film gives a realistic look at how Nixon might have given the speech had things on the Moon gone wrong.

It’s a bit like a more sophisticated version of how Alexa can speak as Samuel L. Jackson using Amazon’s neural text-to-speech (NTTS) technology. Jackson made several audio recordings that were then used to teach Alexa how to sound like the actor, even when saying sentences he never recorded. It’s not quite as smooth as the Nixon speech, but it does come in both explicit and non-explicit versions, which any expanded Nixon deepfake project would definitely need to consider. Synthetic voice creation is improving rapidly, thanks to not only tech giants like Amazon, but startups like Resemble.ai, which can turn just three minutes of audio into a basic synthetic speech model.

Faking and Fraud

While visual and audio elements of Nixon’s fake speech are not flawless, they tend to reinforce each other enough that a casual watcher might not immediately spot any issues. The older sound and look also help disguise where the deepfake may not be perfect. The video and the accompanying features for the project do make a good point about the relative ease of making a deepfake both audio and visual forms that could fool at least some people. How they can be used and misused is largely the point of the project.

“This alternative history shows how new technologies can obfuscate the truth around us, encouraging our audience to think carefully about the media they encounter daily,” creative director Francesca Panetta said in a statement.

Educating people on how deepfakes are put together is a good way to inoculate them against being fooled by it in audio or video form. In some ways, it’s an extension of how people have become better at spotting photo retouching, at least when not done by a professional. The increasing sophistication of deepfake tech though means it’s a mistake to always take what you hear and see for granted. To combat the potential fraud of deepfake voices, companies ID R&D are designing and improving voice biometric technology, while Google Assistant recently revamped its Voice ID feature to make it harder to trick. Those techniques are useful to limit individual fraud, but it may take a lot of media education to ensure the public isn’t tricked by deepfake tech when watching or listening to the news.

Follow @voicebotai Follow @erichschwartz

Google Assistant Can Better Tell Apart Voices After Enhancing Set Up Process

New Resemble AI Software Turns 3-Minute Records into Synthetic Speech Profiles