More than 1,000 Phrases Will Accidentally Awaken Alexa, Siri, and Google Assistant: Study

Voice assistants will awaken when they hear a wide array of words and phrases that are not their actual wake words, according to a new study from the Ruhr University Bochum and Max Planck Institute for Security and Privacy. There are more than 1,000 such terms that can activate a voice assistant, highlighting an ongoing problem that represents a potential privacy risk for users.

Unplanned Alert

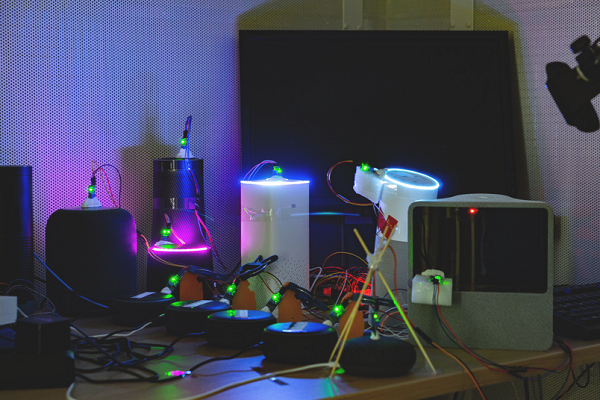

The researchers tested Siri, Alexa, and Google Assistant, along with Microsoft’s Cortana, Deutsche Telekom’s voice assistant, and the voice AIs made by Chinese tech firms Baidu, Tencent, and Xiaomi. They played several hours of television dialogue, news broadcasts, and recorded speeches to the smart speakers and logged every word or phrase other than a wake word that awakened an assistant, using light sensors to detect each activation. The list includes English, German, and Chinese phrases misinterpreted by the voice assistants. Some of the phrases are very close to the actual wake words, as with Google Assistant awakening to “OK, cool,” or Cortana to “Montana.” Others seem more of a stretch, like Alexa waking up to “unacceptable” or mistaking “tobacco” for its alternate wake word, “Echo.”

“The devices are intentionally programmed in a somewhat forgiving manner, because they are supposed to be able to understand their humans,” researcher Dorothea Kolossa said in a statement. “Therefore, they are more likely to start up once too often rather than not at all.”

Sometimes the words or phrases would cause the device to send audio to the cloud. Other times, the device would awaken from the mistaken phrase but not submit information to the cloud. The report’s authors have compiled all of the findings for an upcoming paper titled “Unacceptable, where is my privacy?” Their conclusions and some of the test results have also been collated on GitHub for other researchers to peruse.

Mistake Awake

Around two-thirds of voice assistant users accidentally awaken a voice assistant at least once in a given month, according to a survey from January. How often it happens without anyone noticing is unknown, but counting those cases would presumably only raise that figure. The privacy concerns raised by accidental awakenings are important, especially at a time when so many people are spending more time at home around their smart speakers due to the COVID-19 pandemic. Sooner or later, a smart speaker is likely to mistake something said on a work call for a wake word. People are already suspicious about where their recordings may end up after the quality control programs run by voice assistant developers came under scrutiny last year. Changing those procedures and making people more aware of how they work may not always be enough to attract and keep voice assistant users. A better solution may be allowing users to adjust how sensitive the voice assistant is, as with Google Assistant’s new hotword sensitivity feature. Limiting accidental awakenings while keeping the voice assistant useful will be a delicate balance to strike for some time to come.

“From a privacy point of view, this is of course alarming, because sometimes very private conversations can end up with strangers,” researcher Thorsten Holz said. “From an engineering point of view, however, this approach is quite understandable, because the systems can only be improved using such data. The manufacturers have to strike a balance between data protection and technical optimisation.”
