Nvidia’s New Jarvis AI Can Turn Voices into Interactive Faces

Nvidia debuted a new conversational artificial intelligence system capable of turning the audio of speech into a virtual face that accurately mimics speaking movements. The model is one of many in a framework named Nvidia Jarvis, which harnesses Nvidia’s GPU hardware for a range of enterprise uses as companies adopt and expand their use of AI.

Modeling AI

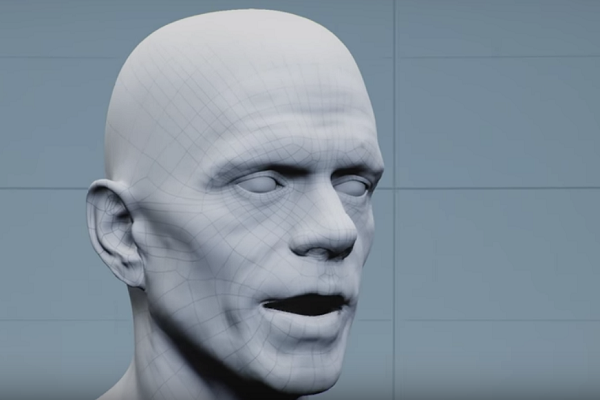

Nvidia Jarvis, likely a reference to the AI assistant used by Iron Man in Marvel movies, can be applied to many industries. At the Nvidia GTC keynote this week, Nvidia CEO Jensen Huang demonstrated how the framework can animate a human face from audio data alone, using a rap performed by an Nvidia employee. The face above moved like it was speaking the words, even without any video to mimic. Huang also showed how it could be used with more cartoonish characters like an animated raindrop, reminiscent of Apple’s Animoji feature.

“It’s quite different under the hood [from Animojis],” Siddharth Sharma, Nvidia’s product marketing lead for AI and deep learning, told Voicebot in an interview. “[Animojis] are 2-D images. [The AI is] looking at faces then animating a cartoon character. In comparison, [Jarvis is] only using voice data, it’s not using vision at all.”

Jarvis uses that voice data to animate three-dimensional images, with more nuanced skin and muscle movement. Sharma compared it to the kind of motion-capture animation done for movies, except the AI is relying solely on audio information and its own algorithms to create the facial animation. As the AI gathers more data, the face becomes ever more human in its movements. The ‘audio2face’ model, as Nvidia titles it, is only one of the hundreds of pre-trained models available for free, Sharma said. The uniting factor is Nvidia’s GPU technology, which makes the AI responsive enough that people are willing to use it, staying within the 300-millisecond threshold for real-time interactions. That’s critical for any enterprise that wants to apply AI to its business, Sharma explained.

“What customers really need right now is conversational AI that is smarter and more human-like,” Sharma said. “It’s impossible to do without a GPU. On a CPU, it would take 25 seconds.”

Accelerating Change

As with everything else, the current COVID-19 pandemic is changing the role of enterprise AI, speeding up adoption and creating more demand for AI by a swath of industries like education, customer service, and healthcare. According to IDC, spending on conversational AI is expected to jump from $5.8 billion last year to $13.8 billion in 2023. Nvidia’s models set a baseline for those companies to train up their own AI.

“The situation we are in is unique,” Sharma said. “We think [Jarvis could help] hospitals and the healthcare sector with COVID, but also in general.”

Nvidia’s models aren’t the only source of enterprise AI, especially during the ongoing health crisis. Nuance recently launched Nuance Mix, a bundle of its conversational artificial intelligence software that lets companies design and build their own virtual assistants. Virtual agent developer Inference Solutions built a whole system for its clients to update their virtual agents with answers related to the pandemic and its effect on the client’s business. Similarly, Satisfi Labs created a pandemic-specific AI platform to answer the questions asked of its event and venue clients.

Even before the pandemic began, Salesforce, one of the largest enterprise companies, started offering ways for clients to design custom voice apps for its Einstein voice assistant platform, and Oracle set up a customizable voice assistant platform for its clients. Meanwhile, Microsoft has all but ended its consumer-facing AI products in favor of making the Cortana voice assistant solely an enterprise service. The pace of adoption for Nvidia’s new models is likely higher than it might have been last year, but it represents the direction enterprise AI has been moving for some time.

