In-Car Voice Assistant Consumer Adoption Webinar Replay with Cerence and Full Q&A

Last week, Cerence CEO Sanjay Dhawan joined Voicebot’s Bret Kinsella to record a webinar on the findings of the recent national survey on voice assistant use in the car. Below is the video recording, which includes responses to several questions from the audience. Sanjay and Bret did not get to many of the questions during the recording, so we have captured written responses to many of them below. If you have additional questions, feel free to post them on Twitter to @bretkinsella or @CerenceInc.

Cerence and Voicebot Extended Q&A

I would be very interested to hear from Cerence whether they included any methodology to evaluate the different voice assistants (VAs) with regard to safe usage inside the vehicle when they developed their Cognitive Arbitrator. We have worked with VAs in our driving simulators and have seen substantial differences in their effects on drivers, depending on their architecture, how tasks are built and by whom, and the way the VA interacts (e.g., how much it talks).

Richard Mack of Cerence: We have not evaluated the effects of multiple assistants on driving behavior in the context of cognitive arbitration. To this point we have focused on evaluating usability and preferences, but this is something we are interested in. Our recent work on safe usage in the vehicle has centered on evaluating different features of our technologies. For example, we found similar eye-gaze behavior across wake-up word, Cerence Just Talk, and push-to-talk interactions, though Cerence Just Talk users spent slightly less time with their eyes on the infotainment system than users of the other methods. We’ve also found that well-designed projections on the windshield, like the ones we’ve shown, do not introduce significantly more eyes-off-road time. Finally, we have evaluated how people interact with VAs depending on how the VA speaks to the driver. Drivers prefer systems that speak in a friendly way (for example, “How can I help you?” versus “Please say a command.”) and are safer with them, likely due to higher usability, less need to look at the screen, and fewer errors. The same is true for systems that allow barge-in, where the driver can simply talk over the system rather than wait for it to finish its prompt.

Could you guys cover off how/if you’re seeing this behaviour translate into other key markets such as the UK and Germany please?

Cerence: Although the survey is U.S.-based, we believe the behaviors are fairly consistent in European markets, as well as others. Basic utilities, such as navigation, phone calls, and command-and-control, are seemingly universal. Differences will be found more in content and other services that might be more popular depending on where you live.

Do you feel it will be useful for drivers to have a skill that lets them get their messages (texts, WhatsApp, etc.) while they are driving?

Cerence: Yes, it will be not only useful but also practical. A number of systems today allow drivers and passengers to have messages read aloud, and some provide the ability to respond. This includes Cerence Drive solutions, which are already on the road and have been for several years.

Bret Kinsella of Voicebot: Agreed. One of the biggest risk factors for driving safety today is texting. Even with widespread warnings, many drivers either dismiss the risk or cannot help themselves. We also see that texting is the third most common voice use case while driving, so this is not an isolated problem. By implementing voice texting systems for drivers, we can create a safer driving experience for everyone. It may be that the best solution is no texting at all while driving, but given drivers’ propensity to text regardless of risk, the practical solution is to make it available in a safer format that lets drivers keep their eyes on the road and hands on the steering wheel.

The report says “Even more intriguing are use cases for pre-ordering food before arriving at a quick-service restaurant or searching for a product and then asking to navigate to the nearest location where it is available.” Are there companies working on these use cases? When can we start using them?

Cerence: These types of programs are in development using a variety of content sources, location-based services, and payment options. While they are in development, we believe it will still be a little while before a full ecosystem exists to provide a seamless experience.

Voicebot: We mentioned a few of these in the webinar recording, so I encourage you to look at that. Sanjay pointed out gas refills as an obvious option, and I focused on fast food retailers such as Starbucks and Dunkin Donuts that already offer this today. So, you can start now.

Is this data about built-in voice assistants or any voice assistant?

Voicebot: All voice assistant use in the car.

Do you have data on how much of this is the OEM system and how much is CarPlay/Android Auto?

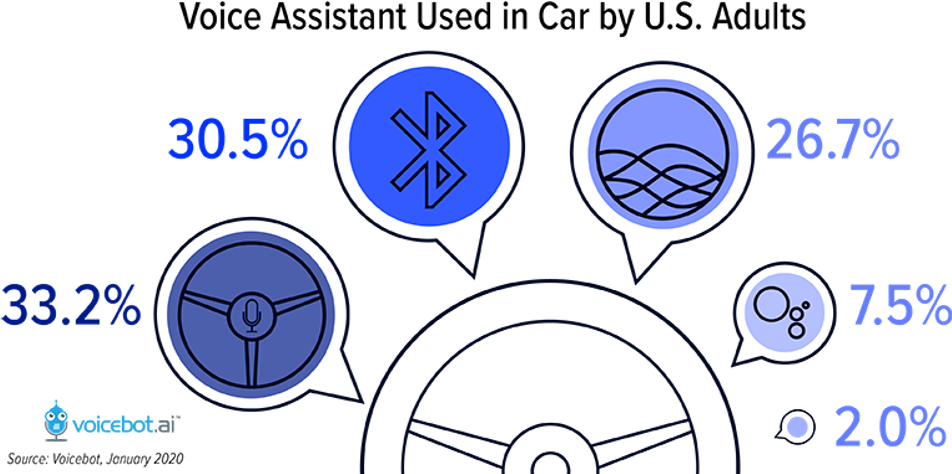

Voicebot: Yes. On page 9 of the report, we break down the percentage of use of embedded assistants versus Bluetooth, Apple CarPlay, Android Auto, and Alexa Auto. The chart is included above for your reference.


What types of transactions are happening with the voice assistant in the car? Is it mostly directions? Calling someone? Responding to texts? Do you have data on that breakdown?

Cerence: In our user studies, drivers state that they expect to use navigation, music, phone calls, and text messages the most, in that order. But when we’ve observed drivers “in the wild,” the most common voice use cases are radio, text messages, phone, and weather. When we include how often they complete tasks by both voice and touch, the top use cases are phone, radio, navigation, and text.

Voicebot: There is additional information from our national survey in the U.S. on page 15 of the report. Phone, navigation, texting, and music have topped the list for the past two years.

Is the voice assistant linked to the owner of the car for security?

Cerence: Good question, and a few things to note. These assistants are not linked to the owner of a vehicle. However, Cerence does provide the ability to add voice biometrics to a vehicle. As the systems evolve and the use cases expand, there is also the potential for biometrics to be used for keyless entry and ignition down the road. It’s also worth noting that even though there is usually no security in these systems, most will adjust or train to the principal speaker/driver through a profile.

Question for Sanjay: what educational efforts (across OEMs, directly to consumers, to teach capabilities and use) are being envisioned by Cerence?

Cerence: Cerence is working with OEMs on a number of different fronts, both technical and marketing. First, one of the best places for education is when you take ownership of a vehicle. We’re looking to expand or improve the documentation and quick-start guides for new users. In addition, a growing trend among OEMs is providing training or support when a driver first picks up the vehicle, much like at an Apple Store. Examples range from something as formal as the BMW Genius program to more informal teach-ins while still on the dealer lot. Once you’re in the car and driving it regularly, we are also looking at sending information, such as tips and tricks, through apps, email, social content, podcasts, and more. Second, from a technical standpoint, we are working with OEMs to build lessons, training, prompts, and other mechanisms into these systems. You’ll start to see some of these new capabilities in cars coming to market soon, taking a page out of the smart speaker and smartphone playbooks.

Do the numbers for how consumers access voice account for Alexa being included in the vehicle’s embedded system (e.g., BMW)?

Voicebot: Yes. Consumers were asked which assistants they had used while driving.

I develop Alexa skills. Is there a difference between using Alexa skills within a car and more broad-based skills like “Alexa, turn on porch light”?

Cerence: Alexa skills are supported in the Alexa ecosystem. It is up to Amazon to decide how they manage regular skills vs. in-car skills. Cerence is offering an open architecture for assistant solutions, helping OEMs build their branded car assistant experience while also enabling connection to other assistants using Cerence’s Cognitive Arbitration or voice routing technology.

Voicebot: Alexa skills created for the smart speaker environment today would also be accessible through Alexa Auto in the car. Where you would likely see a difference is in creating multimodal skills with screens using Alexa Presentation Language (APL). I would expect to see more context-specific Alexa skills and features in the Alexa Skills Kit (ASK) in the future.

How do you see third-party systems working with in-car voice systems (now and/or going forward)?

Voicebot: Right now it is the Wild West. Most cars offer multiple voice assistant connection options. Each assistant has a different role in consumers’ lives, so automakers are accommodating the preferences of different people, and they now recognize that many people are comfortable using multiple assistants in their daily routines. There is some concern about the cognitive load on drivers of discerning which assistant to use for which task, but automakers today are biased toward consumer choice, even though they’d prefer that drivers choose the embedded assistant first. Cerence’s cognitive arbitrator solution, which lets a single voice input recognize user intent and then invoke the appropriate assistant to fulfill the need, is an interesting option for making this simpler for drivers.

Cerence: Cerence believes third-party systems working with in-car voice systems is critical for the proliferation and use of these systems. The ability to support multiple assistants, content services, and domains is essential. Unlike a speaker or phone, a car is one of a person’s biggest purchases, so there’s a natural desire for it to support as many capabilities as possible. For example, I’m an iPhone user with an Alexa home speaker, I work on a PC, and I listen to Spotify and Pandora. As all this content converges in the car, I want my system ready to support it all. An open ecosystem is the only way.
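The arbitration idea described above (one voice entry point that recognizes intent and hands the request to the right assistant) can be illustrated with a minimal routing sketch. This is not Cerence’s implementation: the intent names, keyword rules, and target names (`embedded_assistant`, `alexa`, `music_service`) are hypothetical, and a simple keyword lookup stands in for the statistical NLU a real arbitrator would use.

```python
from dataclasses import dataclass

# Hypothetical routing table: which assistant or service handles each intent.
ROUTES = {
    "vehicle_control": "embedded_assistant",  # climate, windows, seats
    "navigation": "embedded_assistant",
    "smart_home": "alexa",
    "music": "music_service",
}

# Hypothetical keyword rules standing in for a real NLU intent classifier.
# Checked in insertion order; the first matching intent wins.
KEYWORDS = {
    "vehicle_control": ("temperature", "window", "seat heater"),
    "navigation": ("navigate", "directions", "route"),
    "smart_home": ("porch light", "thermostat", "garage door"),
    "music": ("play", "song", "playlist"),
}


@dataclass(frozen=True)
class Routed:
    intent: str
    assistant: str


def classify(utterance: str) -> str:
    """Return the first intent whose keywords appear in the utterance."""
    text = utterance.lower()
    for intent, words in KEYWORDS.items():
        if any(word in text for word in words):
            return intent
    return "unknown"


def arbitrate(utterance: str, default: str = "embedded_assistant") -> Routed:
    """Classify once, then hand the request to the assistant mapped to that intent."""
    intent = classify(utterance)
    return Routed(intent, ROUTES.get(intent, default))


print(arbitrate("Turn on the porch light"))
# Routed(intent='smart_home', assistant='alexa')
```

Requests that match no intent fall back to the embedded assistant in this sketch, reflecting the automaker preference described above; that default is a design assumption, not a documented behavior.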

How does [the embedded] voice assistant identify how many passengers are in the car?

Cerence: The Cerence Drive offerings can take advantage of the in-car microphone setup to understand the seating situation. They can not only tell how many people are in the car but also detect which passenger is talking and manage the audio input channels accordingly. Another way to accomplish the same goal is by integrating with other car sensors, such as the seat weight sensors that are already there to control functions like airbags.
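As a rough illustration of the “which passenger is talking” part, the sketch below compares short-term energy across per-seat microphones and picks the loudest zone. It assumes one microphone per seat and a hypothetical speech-activity threshold; production systems also exploit timing differences across the whole microphone array, so treat this as a simplification rather than how Cerence Drive works.

```python
import math
from typing import Dict, List, Optional


def rms(frame: List[float]) -> float:
    """Root-mean-square energy of one frame of audio samples."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def active_seat(frames_by_seat: Dict[str, List[float]],
                threshold: float = 0.05) -> Optional[str]:
    """Return the seat whose microphone frame is loudest, or None if every
    seat is below the (hypothetical) speech-activity threshold."""
    if not frames_by_seat:
        return None
    energies = {seat: rms(frame) for seat, frame in frames_by_seat.items()}
    seat, energy = max(energies.items(), key=lambda item: item[1])
    return seat if energy >= threshold else None


# Hypothetical seat names and sample values for illustration.
frames = {
    "driver": [0.2, -0.2, 0.2, -0.2],
    "front_passenger": [0.01, -0.01, 0.01, -0.01],
    "rear_left": [0.0, 0.0, 0.0, 0.0],
}
print(active_seat(frames))  # driver
```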

Is search not a top use case in the car?

Cerence: Not really, as the study suggests. Think about the most common tasks when driving: navigation, using controls, and talking on the phone. However, over time, and especially as autonomous driving becomes more prevalent, we believe search will inch its way up. Because of this, Cerence is working on a number of location-based services that combine different inputs, including voice and eye tracking, to let users ask questions such as “What time does that Starbucks close?” or “What is the Yelp rating of that pizza shop?” On top of that, we are offering solutions such as General Knowledge Question Answering. This is different from what we know as web search in a browser; it is more about providing quick answers to questions in a conversational way, e.g., Q: “What is the capital of Nicaragua?” A: “Managua is the capital and the most populated city in Nicaragua.”

Voicebot: Asking general knowledge questions was sixth on the list of most frequent uses of voice assistants while driving. More directed searches, such as finding a restaurant or asking about products, were further down the list. Music search is likely much higher, but it is embedded in the use of voice for music streaming or radio, which were the fourth and fifth most frequent use cases.

I’m curious if Sanjay and his team are working on any tools that isolate a person’s voice in the car through acoustic audio subtraction. If not his company, what other companies might be focusing on this problem?

Cerence: Yes, the Cerence Drive solutions include a feature called PIC: Passenger Interference Cancellation. This feature allows a person in any seat to talk to the assistant while ensuring other passengers’ voices do not interfere. Another great use case for the same feature is phone conference calling: it lets the driver ensure that colleagues on the other end of the call cannot hear the kids singing in the back, or decide which specific seats participate in the call. In-Car Communications is another Cerence feature that manipulates audio input and output so that, in three-row vehicles, the back seat can hear the driver without the driver having to turn her head or shout and, vice versa, the driver can hear the back row because their voices are amplified through the front (driver’s) speakers. Finally, Speech Signal Enhancement (SSE) is another Cerence solution that processes the signals from multiple microphones to remove non-speech noise and combines those signals to “steer” the microphones toward a single voice.
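Steering a microphone array toward one voice is commonly explained with delay-and-sum beamforming, sketched below. This is the textbook technique, not Cerence’s SSE algorithm; it assumes the per-microphone arrival delays of the target talker are already known as whole samples, whereas practical systems typically estimate them and add fractional delays and adaptive filtering.

```python
from typing import List


def delay_and_sum(channels: List[List[float]], delays: List[int]) -> List[float]:
    """Align each microphone channel on the target voice and average them.

    channels: equal-length sample lists, one per microphone.
    delays:   how many samples later the target voice reaches each microphone.
    Samples shifted past the end of a channel are treated as zero.
    """
    if len(channels) != len(delays):
        raise ValueError("need exactly one delay per channel")
    n = len(channels[0])
    out = [0.0] * n
    for samples, d in zip(channels, delays):
        for t in range(n):
            src = t + d  # read ahead to undo this channel's arrival delay
            if src < n:
                out[t] += samples[src]
    return [x / len(channels) for x in out]


# Target talker: a pulse reaches mic A at sample 1 and mic B at sample 3.
mic_a = [0.0, 1.0, 0.0, 0.0, 0.0]
mic_b = [0.0, 0.0, 0.0, 1.0, 0.0]
print(delay_and_sum([mic_a, mic_b], [0, 2]))  # [0.0, 1.0, 0.0, 0.0, 0.0]
```

A sound from another direction, for example a pulse reaching both microphones at sample 2, does not line up under the same delays and comes out as two half-amplitude copies, `[0.5, 0.0, 0.5, 0.0, 0.0]`, which is the basic reason the array favors the voice it is steered toward.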

Most OEMs don’t yet have a card on file with the consumer. How do you see consumers establishing a walleted card/identity for voice commerce?

Cerence: Cerence is working on different payment options. The OEM need not have a card on file; the driver could have saved payment options or preferences stored in a digital wallet, similar to the electronic payment systems you might find on your phone. Keep an eye out for news from us soon.

Might the fact that voice assistance is not a major influence on purchase decisions be due to voice being increasingly expected? Is it now normative? Your thoughts, please.

Voicebot: Actually, we found that it was a significant factor at least 20% of the time, with over 60% saying it is at least a consideration. For a major purchase like an automobile, that is an important finding. No automaker wants to lose a sale because it doesn’t support a voice assistant that is critical to a purchase decision. That means automakers will continue to offer many options, as they do today, despite preferring that drivers use the embedded assistant. I also expect this to track general voice assistant use: as voice becomes a more commonplace user interface, the availability of specific voice assistants and features will rise even further in importance.

Cerence: I think the fact that it has at least some bearing on a purchase decision is a positive sign, especially for such a big purchase. Also, the sentiment is likely skewed a bit because voice is often categorized with the overall digital or infotainment experience, where it might not be viewed separately.

What makes the user WANT TO USE the assistant more, and how can they be encouraged to use it more?

Cerence: First and foremost, users expect a system that helps them get the results they want as effortlessly as possible. When they learn it works well for navigation, media, phone, and texting, they want to use it for more advanced features. If they feel a system helps keep them safe and helps them take care of their vehicle, they move on to wanting to use the system to keep them entertained and make them more productive. In our experience, users want to use the system when it transitions from being a novelty to a real utility. We’ve all had the experience of it being fun to talk to a car, a phone, or a speaker, but regular use really takes off when people find real value in the system. I cannot imagine dialing the phone or using my nav system with anything other than the voice assistant when driving. We are conducting User Experience and Usability research in our Cerence DRIVE Labs to explore ways of encouraging and motivating users to adopt in-car assistant solutions. This involves concepts like discoverability and trust, and the insights from these studies are being implemented in Cerence Drive offerings.

Voicebot: I agree with the sentiment about getting results. That is based on a principle of cognitive trust and was an issue with many of the inflexible, lower-accuracy voice assistants offered by automakers in the past. The newer solutions certainly deliver on core expectations better and offer a broader array of services. However, the bigger driver of adoption is likely to be increased use of voice assistants throughout the day, which will make voice the first option as opposed to a complementary interface.

How does Cerence see the ‘multi-agent’ experience and architecture developing in the car? For example, using Alexa for certain domains vs. OEM native assistant for telematic controls, or if certain family members prefer Alexa vs. Google. What is the expected user experience to configure and interact (e.g., wake word) with multiple agents?

Cerence: This is the beauty of Cerence’s Cognitive Arbitration: our ability to route requests to virtually any agent, content provider or service supported in the car. Users want an open system that supports their total digital lifestyle and not a closed system.

What is the plan for a natural language embedded voice assistant?

Cerence: This exists today! Cerence Drive solutions are based on state-of-the-art hybrid technology. They include both cloud AI and embedded AI, including statistical ASR (automatic speech recognition) language models and statistical NLU (natural language understanding) models. In fact, such solutions have been on the road for several years in multiple car models, allowing drivers and passengers to interact with the assistant using natural language, even when connectivity is not available.
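The hybrid arrangement described above, with cloud and embedded models working together so the assistant still understands requests offline, can be sketched as a simple fallback policy. The function and parameter names are hypothetical, the recognizers are stand-in callables that take text for simplicity, and real hybrid systems may instead run both paths in parallel and arbitrate between their results.

```python
from typing import Callable, Optional, Tuple

# A recognizer maps an utterance to an interpretation (text in, text out here).
Recognizer = Callable[[str], str]


def hybrid_understand(utterance: str,
                      embedded: Recognizer,
                      cloud: Optional[Recognizer],
                      online: bool) -> Tuple[str, str]:
    """Prefer the cloud model when connected; otherwise, or if the cloud call
    fails with a connection error, use the on-device (embedded) model."""
    if online and cloud is not None:
        try:
            return "cloud", cloud(utterance)
        except ConnectionError:
            pass  # connectivity dropped mid-request: fall back to embedded
    return "embedded", embedded(utterance)


def on_device(u: str) -> str:
    # Hypothetical stand-in for an embedded NLU model.
    return "embedded-nlu:" + u.lower()


def flaky_cloud(u: str) -> str:
    # Hypothetical cloud service that is currently unreachable.
    raise ConnectionError("no signal")


print(hybrid_understand("Navigate home", on_device, flaky_cloud, online=True))
# ('embedded', 'embedded-nlu:navigate home')
```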

Do you see a future for “unprompted” voice interaction with the user? For instance, the application speaking an audible alert to the user (e.g., navigation alerting of an accident up ahead, severe weather alerts in the area, Amber alerts, etc.)?

Cerence: Yes. Just as turn-by-turn voice instructions are already part of every navigation system, it is legitimate to consider additional use cases for proactive notifications by the assistant (what the question calls “unprompted”). The key to doing it right is research-based UX design that considers elements like distraction and end users’ acceptance of such behaviors. This is part of the mandate of Cerence Drive Lab, and we already have a lot of insights and work in that direction. We’ve done nearly half a dozen user studies on the topic of the system being more proactive, ranging from unprompted voice alerts to visual alerts that let the user know the system wants them to engage with it to hear about something less urgent. Acceptance depends heavily on the context of the situation and on whether the alert is safety-critical to the driver or the vehicle. Examples outside the safety-critical category include alerts that help drivers avoid traffic or ease parking.

Is there an opportunity for Cerence to work closer with Big Tech like Google / Amazon?

Cerence: 100%. We do today and will continue to do so. When talking about the big providers, it’s not Cerence or them, but Cerence and them. Keep in mind also that outside the US, a number of others are also in the equation, like Alibaba, Yandex, etc.

What do you think about the voice assistant’s voice? Is it similar to a human voice?

Cerence: It all depends on the system and what has been built. Cerence offers a whole range of voices and languages. Some are virtually indistinguishable from a human voice; others are slightly lower quality so they can run on more basic systems that don’t have the processing power, acoustics, or price point to support something more advanced. Also, as an aside, we recently launched Cerence My Car My Voice, which allows people to clone a voice to be used as the voice of the assistant. You can have your spouse or best friend speak to you through the car. This is available in China now and will make its way West very soon.

Voicebot: Synthetic voices have improved a great deal over the past few years. Sometimes, particularly in short interactions, consumers can be fooled into thinking a synthetic voice is from a real person, which some Voicebot research from 2019 confirmed. However, the opposite was also occasionally true: some people mistook a human voice for a synthetic one. The more important development from our perspective is that consumers have become comfortable with the synthetic voices commonly used in voice assistants today. They are humanlike enough not to be distracting and even help build emotional trust with users, while also being effective enough at communication to be accepted despite those times when they are clearly machine-generated.

How relevant do you feel driver stats (alert level, capacity, etc.) will remain as fully autonomous readiness becomes more prevalent?

Cerence: As autonomous driving takes hold, driver stats will likely become less relevant. However, what will explode are the content and services available through the vehicle. Voice and other modes of interaction tied into a broad and open ecosystem will be huge.

Does Cerence plan to adopt more sophisticated NLU designs such as BERT?

Cerence: Yes, Cerence is deep into deep learning. We use deep neural nets across all platforms and have a research team always investigating and implementing the latest innovative technologies. BERT is one of them; this is a core part of Cerence DNA.
