Alexa is Learning to Speak Emotionally

Alexa developers can inject some emotion into the voice assistant thanks to a new feature that enables the voice assistant to simulate certain feelings in its responses. In tandem with the emotional responses, Amazon has also added some new speaking styles to Alexa, adapted to sound more natural when discussing either news or music.

Emotional Alexa

The Alexa emotions offer voice app makers the choice of having the voice assistant sound happy and excited or sad and empathetic. It’s not a binary choice, as both options include three levels of intensity. To give Alexa’s responses the right emotional tone, the voice assistant applies Amazon’s neural text-to-speech (NTTS) technology, mimicking the intonations of human speech and making the result sound more natural than the standard Alexa speech. The results are notably more passionate than Alexa’s usual voice of cool, slightly inhuman reason. You can almost feel the enthusiasm at the highest intensity of the happy emotion, and the disappointed voice makes Alexa sound like it is giving up on the user’s future. According to Amazon, the emotional responses improved overall customer satisfaction by 30%.
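For skill developers, the emotion and its intensity are chosen through markup in the skill's spoken response. The following is a minimal sketch, assuming the `amazon:emotion` SSML tag with `name` values `excited` and `disappointed` and `intensity` values `low`, `medium`, and `high`; the helper `emotional_ssml` is hypothetical and not part of any Amazon SDK, so the tag and values should be checked against Amazon's current SSML reference.

```python
from xml.sax.saxutils import escape

# Assumed values for the amazon:emotion tag; verify against Amazon's SSML docs.
EMOTIONS = {"excited", "disappointed"}
INTENSITIES = {"low", "medium", "high"}


def emotional_ssml(text: str, emotion: str, intensity: str = "medium") -> str:
    """Hypothetical helper: wrap text in an amazon:emotion SSML tag."""
    if emotion not in EMOTIONS:
        raise ValueError(f"unsupported emotion: {emotion!r}")
    if intensity not in INTENSITIES:
        raise ValueError(f"unsupported intensity: {intensity!r}")
    # Escape &, <, and > so the spoken text cannot break the markup.
    return (
        "<speak>"
        f'<amazon:emotion name="{emotion}" intensity="{intensity}">'
        f"{escape(text)}"
        "</amazon:emotion>"
        "</speak>"
    )


if __name__ == "__main__":
    print(emotional_ssml("You won the round!", "excited", "high"))
    print(emotional_ssml("Your team lost tonight.", "disappointed", "low"))
```

Validating the two attributes up front keeps a typo from silently producing markup that the voice service might reject or ignore.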

The speaking styles are similar to the emotions, except they apply to an entire skill. While the styles are limited to news and music at the moment, Amazon has said it is working on additional options for Alexa. When activated, the news style is designed to evoke the way television news anchors speak, while the music style is clearly aimed at making Alexa sound like a DJ on a popular radio station. Changing the speaking style makes Alexa seem more human, according to Amazon, with the news style rated as 31% more natural than the standard Alexa voice and the music style rated as 84% more natural.
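A speaking style can be sketched in the same way as markup around the response text. This example assumes the `amazon:domain` SSML tag with `name` values `news` and `music`; the helper `styled_ssml` is hypothetical, and how broadly a style applies within a skill should be confirmed in Amazon's documentation.

```python
from xml.sax.saxutils import escape

# Assumed values for the amazon:domain tag; verify against Amazon's SSML docs.
STYLES = {"news", "music"}


def styled_ssml(text: str, style: str) -> str:
    """Hypothetical helper: wrap text in an amazon:domain SSML tag."""
    if style not in STYLES:
        raise ValueError(f"unsupported speaking style: {style!r}")
    # Escape &, <, and > so the spoken text cannot break the markup.
    return (
        "<speak>"
        f'<amazon:domain name="{style}">'
        f"{escape(text)}"
        "</amazon:domain>"
        "</speak>"
    )


if __name__ == "__main__":
    print(styled_ssml("Here are today's top headlines.", "news"))
    print(styled_ssml("Up next, a new single from your favorite band.", "music"))
```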

Emotional AI

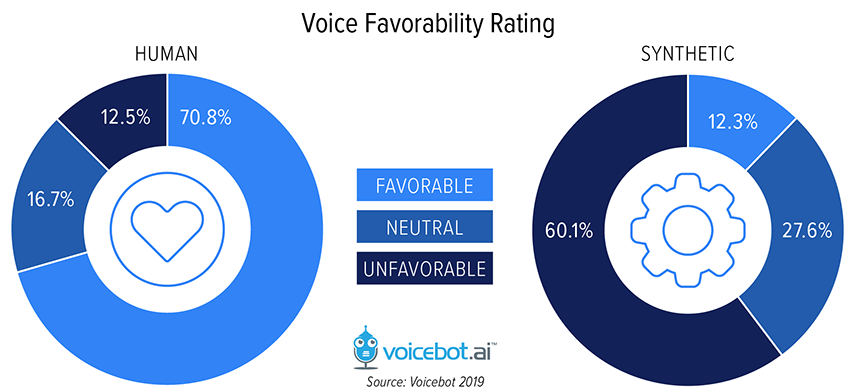

Making voice assistants sound more human is more than a minor style choice. Data collected by Voicebot has found a clear preference for human voices over synthetic ones, with inverse favorability ratings, as can be seen in the chart above. That preference impacts how well people remember what they hear. According to Voicebot’s survey, people correctly remember a website or other call-to-action from an audio clip spoken by a synthetic voice only 13.4% of the time. That’s less than half of the 32.5% of people who correctly recalled it from a clip of similar length spoken by a human.

As for the best times for Alexa to include emotion in its response, Amazon suggests that voice skills for games and sports would be the most obvious venue. Alexa could sound excited and happy when the user is playing a game or there’s been an exciting sports event. Conversely, telling someone they lost a game or that their local baseball team lost may be easier to take if the one telling you the bad news sounds like they are sad about it too. There are many ways that an emotional Alexa would be useful, although it may require more than the one-dimensional range of happiness currently on offer.

Applying emotion to AI and voice technology is an ongoing project beyond the new Alexa features. The auto industry, in particular, is looking at ways to incorporate technology that can both determine the emotional state of a person and respond in the most appropriate way. Nuance Automotive, which is now an independent company called Cerence, showed off its own technology in this space at CES in January. Emotional AI for cars is also being piloted by Toyota, Kia, and General Motors. Alexa’s emotional response doesn’t adapt its tone to its circumstances, as Alexa can’t read emotions through a smart speaker. However, Amazon is reportedly working on a smart wristband that can detect emotions, which could lead to Alexa matching how it speaks to the emotions of the people around it.
