Google Assistant Wins Another Open Question Test While Apple Siri and Amazon Alexa Improve Substantially

Google Assistant has won another open question test, in which smart speakers are asked queries and scored on whether they understand each question and answer it correctly. In Loup Ventures' now-annual Smart Speaker IQ Test, the Amazon Echo, Apple HomePod, Google Home, and Harman Kardon Invoke smart speakers were asked 800 questions and the responses of their respective voice assistants were measured. All four voice assistants (Alexa, Siri, Assistant, and Cortana) understood at least 99% of the queries, so the metric that matters is correct answers. Google Assistant was first with 87.9% correct, followed by Siri with 74.6%, Alexa with 72.5%, and Cortana with 63.4%.

Patterns Break By Category

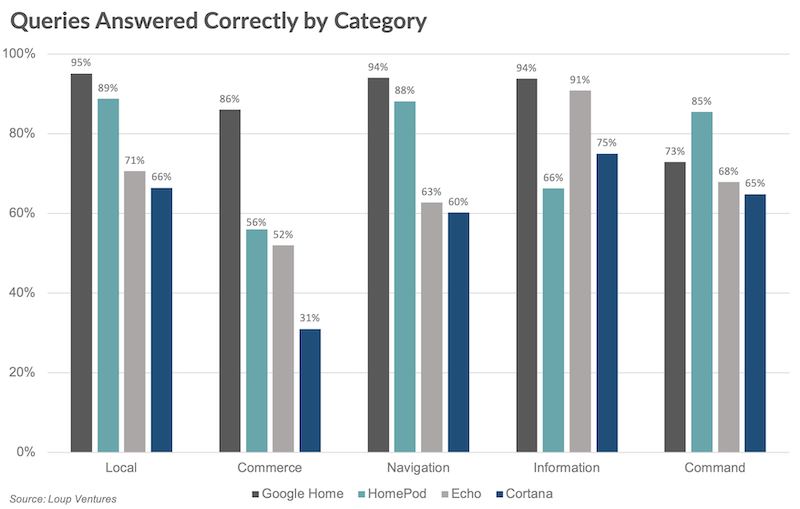

Loup’s analysis further breaks the results down by question category: Local, Commerce, Navigation, Information, and Command. The overall order of Assistant, Siri, Alexa, Cortana holds in every category except Information and Command. Siri trails all other assistants in the Information category, while Alexa comes in second, very close to Google Assistant. This is a remarkable improvement for Alexa, which scored only 68% in a similar test in 2017.

Loup analysts suggest this improvement may be influenced by Amazon’s recent rollout of Alexa Answers, which crowdsources answers to questions from device users. Regardless, it is a notable improvement and shows that Amazon can close the gap in a category that plays to Google’s strengths in search. Alexa also showed significant improvement in local search, rising 9%, which is likely attributable to the company’s integration with Yext.

The other break in the pattern was Siri’s standout performance in the Command category, which includes requests such as controlling smart home devices and setting timers. Loup speculates that Apple’s tight integration between the HomePod and Apple smartphones may have helped here: Siri posted an 85% success rate compared to Google Assistant’s second-place 73%. Apple also excelled in the Navigation and Local categories. Success in Navigation is likely the result of several years’ investment in Apple Maps. The Local category performance may be the result of recent improvements in Siri’s machine learning capabilities, which were applied to recognizing proper names of local points of interest.

Closing the Gap with Google

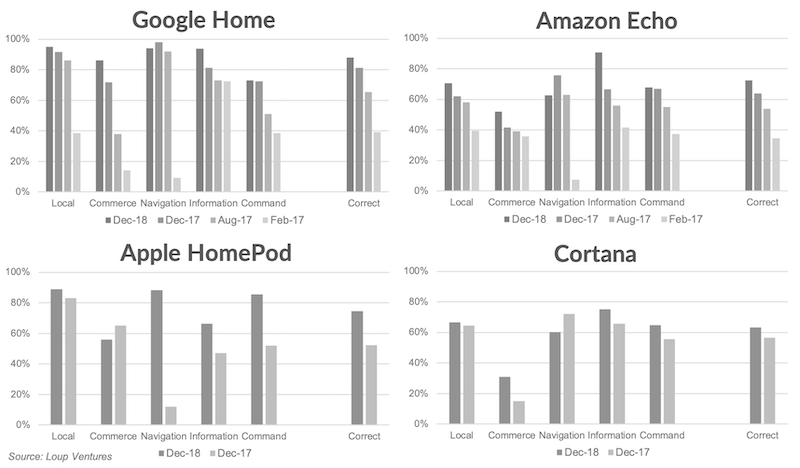

The big story here is less that Google Assistant won once again and more that Siri, Alexa, and Cortana are closing the gap. Loup points out that each of the voice assistants is improving. Siri, Alexa, and Cortana, which were well behind Google Assistant in the August and December 2017 tests, are improving at a faster rate and narrowing the performance gap. That said, Google Assistant has shown significant improvement since August 2017 in two areas, Commerce and Command, so it isn’t as if Google had no work to do over the past year.

Voicebot surveys have found that asking questions is the smart speaker feature consumers have tried most and the second most commonly used on a monthly basis, trailing only music listening. The ability to answer questions correctly may be an advantage today, but it doesn’t appear to be a key driver in purchase decisions. Over time, as the gaps narrow further, you should expect this to be even less of a differentiator. The full analysis is available from Loup Ventures.
