Pullstring Adds Multi-Modal Support to Converse Platform

Pullstring Vice President Guillaume Privat

Pullstring has announced that it now supports building multi-modal experiences through its Converse voice app development software. Converse is used by agencies and organizations looking to build voice applications in-house. The rise of smart displays is making visual integration into the voice app development process more common. In an email interview, Pullstring’s vice president of product, Guillaume Privat, said that the company’s customers were asking for new features to support visual elements on smart devices:

“Organizations have begun asking for more screen support. There are two main reasons for this. First, as Amazon is allocating significant resources to marketing the Echo Show and Echo Spot devices, organizations want to ensure they are providing great experiences on these devices for their customers. Second, they are beginning to experiment with building an immersive experience providing more context for future interactions with users. It’s still early days for voice-first screens, but we see a lot of potential here for organizations to build rich user experience with our platform.”

A blog post announcing the new features offers insight into the two main feature enhancements to the Converse software solution.

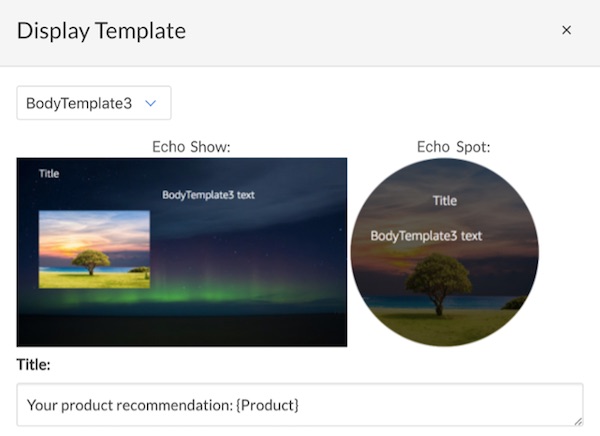

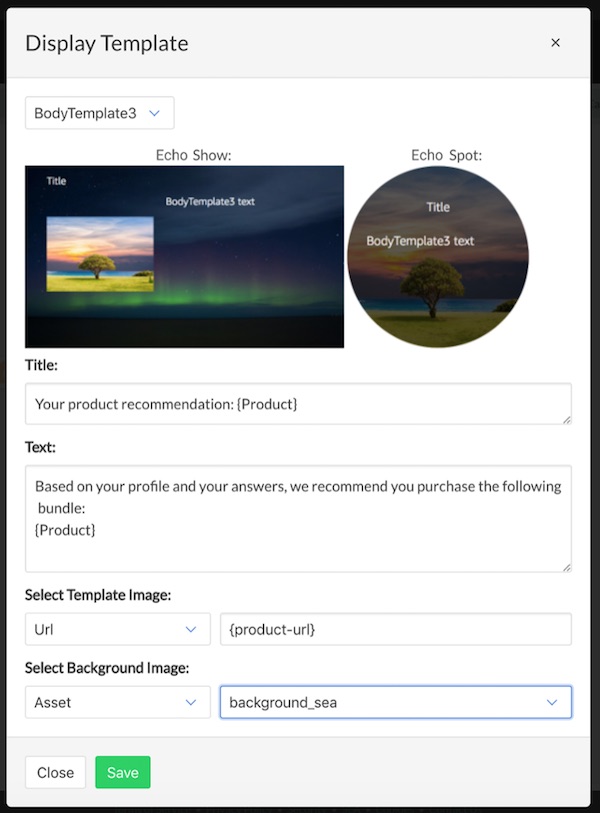

- Home Cards: home cards are displayed in the Alexa companion app or on the Echo Show and Echo Spot.

- Display Templates: display templates will appear on the screen of the Echo Show or Echo Spot and show information while the user is using the skill.
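For readers curious what these two elements look like underneath, here is a minimal sketch of a raw Alexa skill response that carries both a home card and a display template. It is not Pullstring's implementation; Converse produces this kind of output from its authoring tools. The function name `build_response`, its parameters, and the `welcome-screen` token are hypothetical and chosen only for illustration. The field names follow the Alexa Skills Kit response format as the author of this sketch understands it.

```python
import json


def build_response(speech, card_title, card_text, screen_title, screen_text,
                   supports_display):
    """Return an Alexa-style response dict (hypothetical helper, for illustration)."""
    response = {
        "outputSpeech": {"type": "PlainText", "text": speech},
        # Home card: shown in the Alexa companion app and on screen devices.
        "card": {"type": "Simple", "title": card_title, "content": card_text},
        "shouldEndSession": False,
    }
    # Display template: only added when the requesting device reports a screen
    # (assumption: the caller has already checked the device's Display support).
    if supports_display:
        response["directives"] = [
            {
                "type": "Display.RenderTemplate",
                "template": {
                    "type": "BodyTemplate1",
                    "token": "welcome-screen",  # hypothetical token
                    "backButton": "HIDDEN",
                    "title": screen_title,
                    "textContent": {
                        "primaryText": {"type": "PlainText", "text": screen_text}
                    },
                },
            }
        ]
    return {"version": "1.0", "response": response}


if __name__ == "__main__":
    example = build_response(
        "Welcome back.", "Welcome", "Thanks for using the skill.",
        "Welcome", "Say 'help' to hear your options.", supports_display=True,
    )
    print(json.dumps(example, indent=2))
```

The card travels with every response, while the display directive is added only for devices with a screen, which mirrors the distinction between the two bullet points above.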

Alexa Today with Google Coming Soon

Note that the screenshot above shows Alexa support. Google also has multi-modal elements, in particular the text presented to Google Assistant users on smartphones. Mr. Privat said that Pullstring users can already build multi-modal features for Google Assistant in the company’s previous-generation software and that Converse support would be coming in a future release:

“PullString Conversation Cloud supports Google Assistant and its multi-modal functionalities; these experiences can be designed using our legacy authoring environment. We’re excited to add support in PullString Converse for Google Assistant in an upcoming release.”

New Features for Debugging and Multiple Environments

The updated release also includes new features that make debugging easier and support multiple environments. For debugging, the focus is on unit testing: a developer can test specific parts of a conversation rather than the entire Alexa skill at once. The test run automatically executes the conversation logic and displays the log results, so developers can identify failure points proactively.

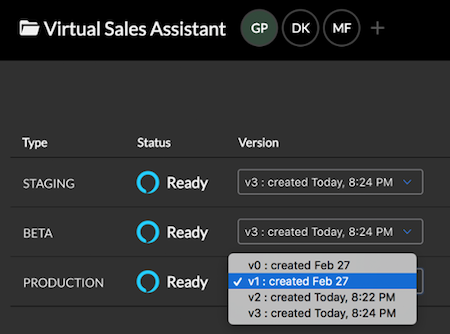

New development environments in Converse 1.2 include staging and beta environments. This enables developers to deploy code to different instances of the voice app in addition to production.

That means you can have an Alexa skill in production, a new version in beta, and another in staging that is being prepared for a new update or is waiting for code review. The feature also lets you swap between versions and roll back to previous versions as needed. The environments can also be under different Amazon developer IDs so “a digital media advertising agency could run the staging version of a skill in their vendor ID, but have the production version in their customer vendor ID.”

It was not long ago that almost all development for Alexa skills and other voice apps relied entirely on bespoke code development. Tools like Converse and its competitors are leading to more self-service options for organizations that want to bring these capabilities in-house and know they need tools to manage the development and maintenance processes for voice apps.

