Invite 2000 People to Test Your Amazon Alexa Skill

Amazon announced a new beta feature today for Alexa skill testing. Previously, you had to add someone to your Alexa developer account in order for them to test the skill. This was a significant barrier because it granted the user access to your skill configuration, and in practice it limited testing to technically proficient users. That is typically not the ideal tester profile for consumer-oriented voice applications. Amazon Alexa Evangelist David Isbitski had this to say in a blog post today:

You can now invite thousands of users to test your Alexa skill and provide feedback before you publish your skill. By introducing real user feedback into your development process, you can build more intuitive voice experiences. We recommend you ask users to beta test your skill, gather any feedback, then optimize your skill’s voice experience before submitting your skill for certification.

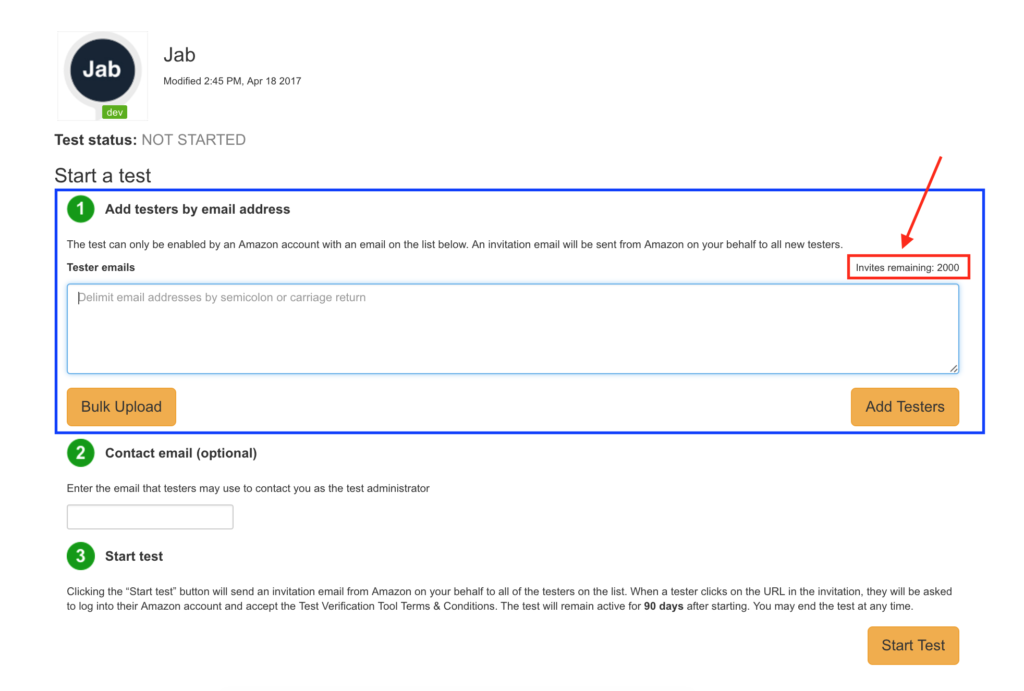

In this case, "thousands" means a cap of 2000 test users. Most developers today test only by themselves or with one or two other users. The idea that hundreds or thousands of users can provide feedback using their personal devices is a great benefit.

Easy Process for Developers and Users

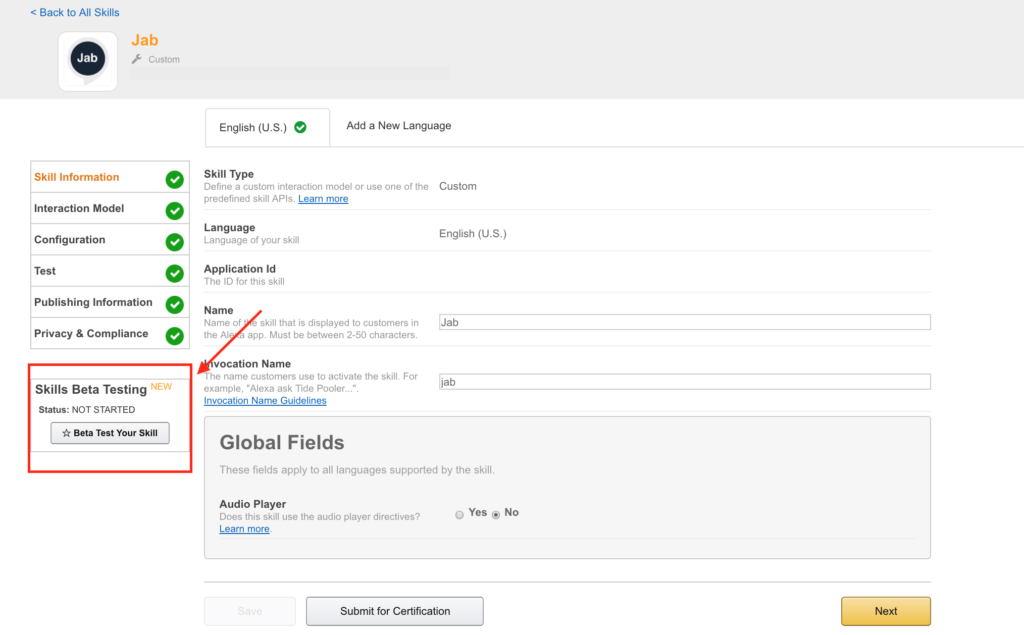

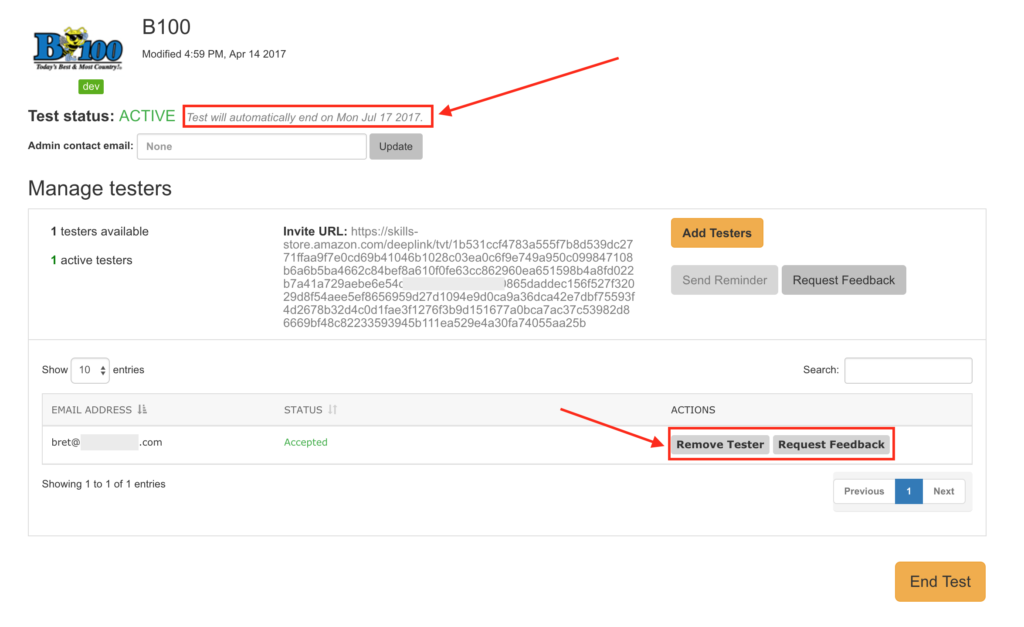

The process to add test users is very easy for developers. They simply need to enable the skill for Beta Testing and then add user email addresses to a form.

Enabling Alexa Skill for Beta Testing

Inviting Users to Test the Skill

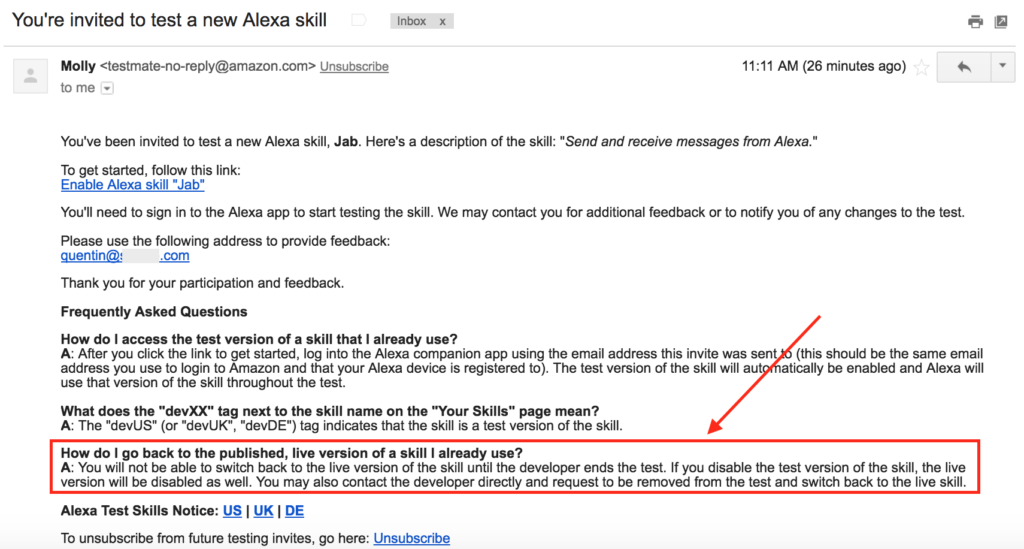

As the form shows, you can add emails individually or upload them in a batch, a nice feature when you are adding a lot of contact emails. The user then receives a standard templated email to sign up for the test process. This email is not editable by the skill developer.
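Before a batch upload, it can help to clean the address list and check it against the 2000-user cap. The following is a minimal sketch, not part of Amazon's tooling: the function names, the loose email pattern, the lowercasing of addresses, and the one-address-per-row file layout are all assumptions made for illustration, so check the upload form for the format it actually expects.

```python
import csv
import re

MAX_TESTERS = 2000  # tester cap cited in the article

# Deliberately loose pattern (assumption): something@something.something
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def prepare_tester_list(raw_emails, limit=MAX_TESTERS):
    """Return (accepted, rejected) lists of cleaned email addresses.

    Addresses are trimmed and lowercased, blanks and duplicates are
    skipped, and malformed or over-the-cap addresses are rejected.
    """
    seen = set()
    accepted, rejected = [], []
    for raw in raw_emails:
        email = raw.strip().lower()
        if not email or email in seen:
            continue  # ignore blanks and duplicates
        if not EMAIL_RE.match(email) or len(accepted) >= limit:
            rejected.append(email)
            continue
        seen.add(email)
        accepted.append(email)
    return accepted, rejected


def write_batch_file(emails, path):
    """Write one address per row (hypothetical layout for the upload)."""
    with open(path, "w", newline="") as fh:
        writer = csv.writer(fh)
        for email in emails:
            writer.writerow([email])
```

Rejected addresses are returned rather than silently dropped, so you can correct them or hold them for a later test round.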

It is worth noting that when a user accepts the test, the test version of the skill is enabled and any previous live skill version in their account is replaced. In addition, the user cannot go back to the live production version until the developer ends the beta test that user is associated with. For new skills this won't be an issue since there is no previous version. However, for developers recruiting current users to test updates, this will be an important consideration. It may be particularly important for skills that are personalized or that keep user history such as game scores, lists or previous questions.

Once a user signs up, the developer can request feedback from them or remove them individually. Also, each test will automatically end after 90 days, leaving the user without access to the test skill. The developer can set a test window shorter than 90 days or end the test at any time.
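For planning purposes, the 90-day limit translates into a simple date calculation. This is a rough sketch only: the function name is hypothetical, the start date in the usage example is invented, and it assumes the window is counted from the day the test starts, which the announcement does not spell out.

```python
from datetime import date, timedelta

MAX_TEST_DAYS = 90  # automatic cutoff cited in the article


def beta_end_date(start, days=MAX_TEST_DAYS):
    """Return the date a beta test ends, given its start date and length.

    Assumes (not confirmed by the source) that day counting begins on
    the start date and that shorter windows are whole numbers of days.
    """
    if not 1 <= days <= MAX_TEST_DAYS:
        raise ValueError(f"days must be between 1 and {MAX_TEST_DAYS}")
    return start + timedelta(days=days)


if __name__ == "__main__":
    # Hypothetical start date, for illustration only.
    print(beta_end_date(date(2017, 5, 1)))
    print(beta_end_date(date(2017, 5, 1), days=30))
```

Knowing the cutoff in advance matters most when testers are existing users, since they stay on the test version until the test ends.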

What the User Sees

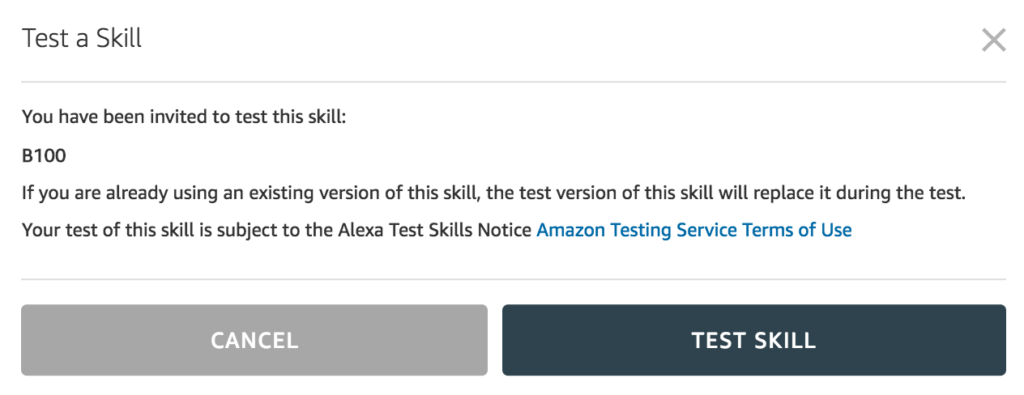

The user clicks through on the invite and is either asked to log in to their Amazon account or taken directly to the Alexa skill page with an open dialog box.

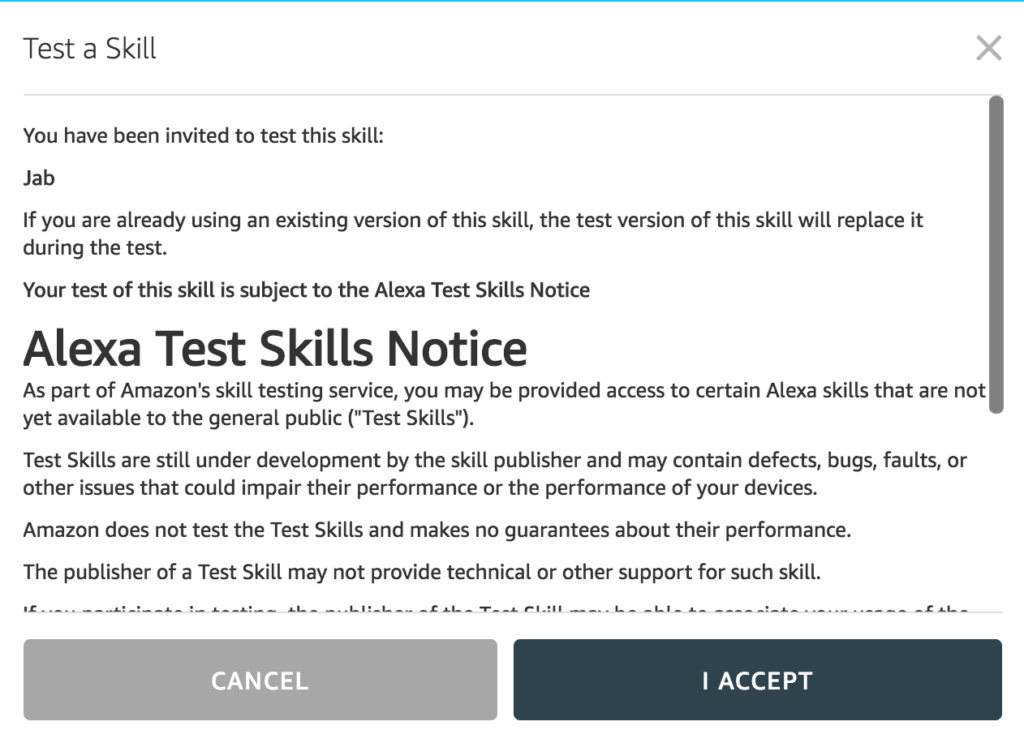

The user is then prompted to accept the terms of the test.

There is an interesting clause in the Alexa Test Skills Notice that says:

If you participate in the testing, the publisher of the Test Skill may be able to associate your usage of the Test Skill with you.

While this makes sense in the abstract, unless you were the only test user, or maybe one of a handful, the developer would have little idea which sessions belonged to any specific individual. The clause makes sense from a legal standpoint because the developer knows the email address of everyone invited to test the skill, but in practice it is unlikely to be a privacy infringement. In fact, there should be an option where testers are fully known to developers. That way developers can match user feedback to actual session data. The Alexa team can add this to their list of feature requests.

Requesting User Feedback

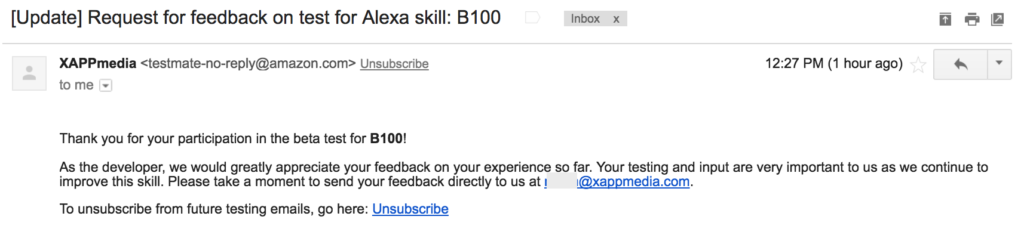

While there is no way to solicit direct user feedback within the system itself, there are three ways developers can gather data from test users. The first is through usage. The test user sessions will be recorded and developers can see aggregate activity through the new analytics dashboards. The second is a templated email that you can push to the user through the system. Again, this is not an editable email. It simply requests direct feedback from the user.

This format may comply with CAN-SPAM, but it has an ineffectual call to action (CTA). If you have a strong tie to your test cohort, it may not matter, but it requires them to open an email and then offer free-form feedback. A better solution would be to offer more targeted CTA language and either an embedded form or a link to an online form or survey. This brings us to the third option for soliciting feedback. You already have the users' email addresses, so you can email them a survey or form link directly and not be constrained by the system limitations.

Long Needed Feature

Voice user experience is not well understood by developers. As a result, many Alexa skills have poor user ratings and user retention problems. Some of those ratings reflect the lack of skill utility, but most address specific shortcomings in skill performance or design. Design can help avoid issues, but testing at scale often reveals unexpected problems and opportunities that can be addressed before the skill is available to the general public. That can reduce the risk of accumulating negative feedback. While the new Amazon Skill Builder Beta will likely get more press coverage, the new Beta Skill Testing is the more important feature for developers.

Amazon’s announcement last week of the availability of its far-field microphone array for developers, followed by the testing feature today, sends a clear market signal. Amazon is committed to helping third-party hardware and software developers build better user experiences for consumers.


Editor's Note: Thank you to Quentin Delaoutre, developer of the Jab Alexa skill, and Michael Myers from XAPPmedia for their insights in developing this article.
