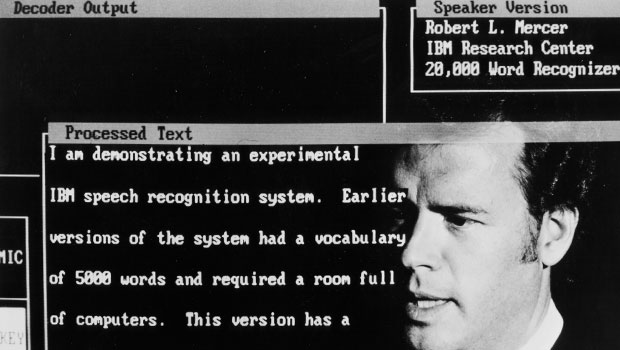

IBM Claims New Speech Recognition Record for Watson

IBM claims a new speech recognition record for Watson in a white paper published on March 6th through the Cornell University Library. George Saon, a principal research scientist at IBM, elaborated on the research in a blog post last week. There are two key data sets (i.e., corpora) that many automatic speech recognition systems are tested against: SWITCHBOARD and CallHome. IBM achieved word error rates of 5.5% and 10.3%, respectively, on these data sets. Working with speech technology services company Appen, IBM measured human error rates of 5.1% and 6.8% on the same data sets. Mr. Saon commented:

Reaching human parity – meaning an error rate on par with that of two humans speaking – has long been the ultimate industry goal. Others in the industry are chasing this milestone alongside us, and some have recently claimed reaching 5.9 percent as equivalent to human parity…but we’re not popping the champagne yet. As part of our process in reaching today’s milestone, we determined human parity is actually lower than what anyone has yet achieved — at 5.1 percent [on the SWITCHBOARD corpus].

Beyond SWITCHBOARD, another industry corpus, known as “CallHome,” offers a different set of linguistic data that can be tested, which is created from more colloquial conversations between family members on topics that are not pre-fixed. Conversations from CallHome data are more challenging for machines to transcribe than those from SWITCHBOARD, making breakthroughs harder to achieve. (On this corpus we achieved a 10.3 percent word error rate – another industry record – but again, with Appen’s help, measured human performance in the same situation to be 6.8 percent).
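All of the error rates quoted here are word error rates (WER). WER counts the minimum number of word substitutions (S), deletions (D), and insertions (I) needed to turn a system's transcript into the reference transcript, then divides by the number of words N in the reference: WER = (S + D + I) / N. The following minimal sketch computes it with a standard word-level edit distance. The function name `word_error_rate` is illustrative only and is not IBM's scoring code. Official benchmark scoring typically also normalizes the text (for example, spelling variants and hesitations) before alignment, and this sketch skips that step.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER = (S + D + I) / N using word-level edit distance.

    Illustrative sketch: splits on whitespace and applies no text normalization.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] is the edit distance between the reference words seen so far
    # and the first j hypothesis words (one rolling row of the DP table).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution, or a match when cost == 0
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


# One substitution ("sat" -> "sit") and one deletion ("the") over 6 reference words.
print(round(word_error_rate("the cat sat on the mat", "the cat sit on mat"), 3))  # 0.333
```

Because insertions count as errors, WER can exceed 100% when a hypothesis is much longer than its reference.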

Achieving Human-Level Performance Harder Than Previously Thought

Microsoft had claimed human parity on the SWITCHBOARD corpus in October 2016, when it reported a word error rate of only 5.9%. At the time, the company said this was “about equal” to the performance of a professional transcriptionist. The IBM white paper had this to say about the earlier Microsoft claim:

A recent paper by Microsoft suggests that we have already achieved human performance. In trying to verify this statement, we performed an independent set of human performance measurements on two conversational tasks and found that human performance may be considerably better than what was earlier reported, giving the community a significantly harder goal to achieve.

Even though IBM Watson’s automatic speech recognition surpassed the performance Microsoft reported, the team concluded that human parity had still not been achieved. Most observers now agree that the best speech recognition systems are very good. However, when evaluating such claims, you need to understand what the researchers are measuring against: IBM’s 10.3% error rate on CallHome may look poor, yet it is a record for that corpus. Julia Hirschberg of Columbia University put it this way:

The ability to recognize speech as well as humans do is a continuing challenge, since human speech, especially during spontaneous conversation, is extremely complex. It’s also difficult to define human performance, since humans also vary in their ability to understand the speech of others. When we compare automatic recognition to human performance it’s extremely important to take both these things into account: the performance of the recognizer and the way human performance on the same speech is estimated. IBM’s recent achievements on the SWITCHBOARD and on the CallHome data are thus quite impressive.
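To put these numbers in context, the short sketch below compares each of IBM's reported results with the human error rate IBM measured on the same corpus. It expresses each gap two ways: absolute, in percentage points, and relative, as a fraction of the human rate. The figures come from this article. The `RESULTS` table and the `gap_to_human` helper are illustrative names introduced here.

```python
# Word error rates (percent) as reported in this article.
RESULTS = {
    "SWITCHBOARD": {"ibm": 5.5, "human": 5.1},
    "CallHome": {"ibm": 10.3, "human": 6.8},
}


def gap_to_human(machine_wer: float, human_wer: float) -> tuple[float, float]:
    """Return (absolute gap in percentage points, gap relative to the human WER)."""
    absolute = machine_wer - human_wer
    return absolute, absolute / human_wer


for corpus, rates in RESULTS.items():
    absolute, relative = gap_to_human(rates["ibm"], rates["human"])
    print(f"{corpus}: {absolute:.1f} points above human, {relative:.0%} relative")
# SWITCHBOARD: 0.4 points above human, 8% relative
# CallHome: 3.5 points above human, 51% relative
```

By this measure, the CallHome gap is much larger in both absolute and relative terms. That is how a 10.3% result can set a record and still fall well short of human performance.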

So, what does this mean? IBM has a very good speech recognition solution for Watson. Amazon, Google, Apple, Houndify, Microsoft and others have a new benchmark to target.