Cathy Pearl – The Secrets of Voice UX From A Practitioner’s Point of View

Cathy Pearl is the Director of User Experience at Sense.ly, the author of the new book from O’Reilly, Designing Voice User Interfaces, and a scheduled speaker at the upcoming Virtual Assistant Summit in San Francisco in late January 2017.

The name of your talk at the Virtual Assistant Summit is “Virtual assistants in health care: will they assist or replace humans?” So what is the answer?

Cathy Pearl: For certain tasks, yes. Sense.ly has a virtual nurse avatar. We have no intention of replacing nurses or assistants, but we would like to enhance what they can do. If you are a nurse, you cannot monitor 500 heart patients every day. Our goal is to fill that gap so you can interact with those patients and have a link back to the doctor. So, we definitely don’t want to replace humans, but to help them.

Tell me about your background and how you got into UX for voice.

Pearl: I have an undergraduate degree in cognitive science: a combination of psychology, AI, and linguistics. I earned a master’s degree in computer science and began to focus on human-computer interaction. The question for me was, “Why don’t we design things for how we actually think and work?”

I then worked as a software engineer for a while and wound up at Nuance Communications. After some time at Nuance, I moved from software engineering to full-time design of IVRs and other voice systems. I was there for eight years, then did some speech work at Microsoft, and decided I didn’t want to do speech anymore. Then, in 2011, Ron Croen, the founder of Nuance, called me up and asked if I would come see his demo. I saw there was a new way to use voice, not just as a way to keep customers from reaching human agents.

We built a choose-your-own-adventure conversational path for the system, and I saw this was a new way to do voice and got interested. I once more tried to get away from voice after that and did some more consulting, but then I met the CEO of Sense.ly, who brought me back. I also just finished writing a book for O’Reilly about designing voice user interfaces.

What are some of the fundamentals of voice interaction that people don’t understand?

Pearl: One thing people miss is how different voice is from discrete interaction. With discrete input, you know whether [a user] pressed a button or typed. If you are designing for voice, you have to understand that it is going to fail. What are you going to do when the speech recognition doesn’t work properly? There are two parts. Speech recognition is the first. Even if that works, you then have part two: does the natural language understanding work well enough that you are actually advancing the conversation?
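The two failure points Pearl describes can be sketched as a turn handler that checks recognition first and understanding second. This is a minimal Python illustration, not Sense.ly’s implementation: `RecognitionResult`, the 0.5 confidence cutoff, and the pizza-size interpreter are hypothetical stand-ins for whatever a real recognizer and NLU component would provide.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class RecognitionResult:
    """Output of a hypothetical speech recognizer (not a real library type)."""
    text: Optional[str]  # None when nothing usable was recognized
    confidence: float    # 0.0 to 1.0


# Hypothetical cutoff; real systems tune this against their own data.
CONFIDENCE_THRESHOLD = 0.5


def interpret_size(text: str) -> Optional[str]:
    """Toy NLU: map an utterance to a pizza size, or None if no size is found."""
    for word in re.findall(r"[a-z]+", text.lower()):
        if word in ("small", "medium", "large"):
            return word
    return None


def handle_turn(result: RecognitionResult,
                interpret: Callable[[str], Optional[str]]) -> str:
    # Part one: did speech recognition return words we can trust?
    if result.text is None or result.confidence < CONFIDENCE_THRESHOLD:
        return "Sorry, I didn't catch that. Could you say it again?"
    # Part two: the words were heard, but does the NLU understand them
    # well enough to advance the conversation?
    meaning = interpret(result.text)
    if meaning is None:
        return "Sorry, I'm not sure what size you mean. Small, medium, or large?"
    return f"Got it: {meaning}."
```

A clearly recognized “the big one” passes the first check but fails the second, which is exactly the case a designer needs a recovery prompt for.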

If you are building a hotel booking app and you ask people when they want to travel, you can handle dates. But what if someone says “next Tuesday”? Your speech recognition handles that fine, but your natural language understanding fails because you did not anticipate that type of answer.
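To make the “next Tuesday” problem concrete, here is a hedged Python sketch of a date interpreter that accepts the format a designer might anticipate (an explicit ISO date) plus a couple of relative phrases. The function name and the rule that “next Tuesday” means the first Tuesday after today are assumptions; people genuinely disagree about that phrase, which is a good reason to read the resolved date back to the user.

```python
import re
from datetime import date, timedelta
from typing import Optional

# Index in this list matches date.weekday() (Monday == 0).
WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday",
            "friday", "saturday", "sunday"]


def parse_travel_date(utterance: str, today: date) -> Optional[date]:
    text = utterance.lower()

    # The format the designer anticipated: an explicit date like 2017-01-24.
    m = re.search(r"\b(\d{4})-(\d{2})-(\d{2})\b", text)
    if m:
        try:
            return date(int(m.group(1)), int(m.group(2)), int(m.group(3)))
        except ValueError:  # impossible dates such as 2017-02-30
            return None

    # Relative phrasing people actually use.
    if "tomorrow" in text:
        return today + timedelta(days=1)
    m = re.search(r"\bnext (" + "|".join(WEEKDAYS) + r")\b", text)
    if m:
        target = WEEKDAYS.index(m.group(1))
        # Assumption: "next Tuesday" is the first Tuesday strictly after today.
        days_ahead = (target - today.weekday()) % 7 or 7
        return today + timedelta(days=days_ahead)

    return None  # not understood: the dialog should reprompt
```

Called on Friday, January 20, 2017, for example, “next Tuesday” resolves to January 24, while an unanticipated phrase such as “sometime soon” returns `None` and should trigger a follow-up question.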

Here is another example. Say you have a pizza-ordering app and you ask, “How can I help you?” If the person answers, “I would like to order one pepperoni pizza,” everyone likes that response: [the user] told you what they wanted to order and the quantity. But what if someone says, “I want to order some pizzas”? They haven’t told you anything specific, and you need to ask additional questions to find out what they would like to order.
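The pizza example is a slot-filling problem: the system needs a few pieces of information and should ask only for the ones the caller hasn’t supplied yet. A minimal Python sketch, assuming a hypothetical order with just two slots (quantity and topping) and naive keyword matching in place of a real NLU engine:

```python
import re
from typing import Dict, Optional

NUMBER_WORDS = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5}
TOPPINGS = {"pepperoni", "cheese", "mushroom", "sausage"}
PROMPTS = {
    "quantity": "How many pizzas would you like?",
    "topping": "What kind of pizza would you like?",
}

Slots = Dict[str, Optional[object]]


def extract_slots(utterance: str) -> Slots:
    """Pull out whatever the user already said in one utterance."""
    slots: Slots = {"quantity": None, "topping": None}
    for word in re.findall(r"[a-z0-9]+", utterance.lower()):
        if slots["quantity"] is None:
            if word.isdigit():
                slots["quantity"] = int(word)
            elif word in NUMBER_WORDS:
                slots["quantity"] = NUMBER_WORDS[word]
        if slots["topping"] is None and word in TOPPINGS:
            slots["topping"] = word
    return slots


def merge(known: Slots, new: Slots) -> Slots:
    """Keep earlier answers; fill only the slots that are still empty."""
    return {k: known[k] if known[k] is not None else new.get(k) for k in known}


def next_prompt(slots: Slots) -> Optional[str]:
    """Ask only for what is missing; None means the order is complete."""
    for name in ("quantity", "topping"):
        if slots[name] is None:
            return PROMPTS[name]
    return None
```

“I would like to order one pepperoni pizza” fills both slots, so `next_prompt` returns `None`; “I want to order some pizzas” fills neither, so the system asks how many, then merges each later answer into what it already knows.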

We need to design for how people talk, not how we want them to talk. When you put things out there in the real world, you find that people talk about things very differently than you expect.

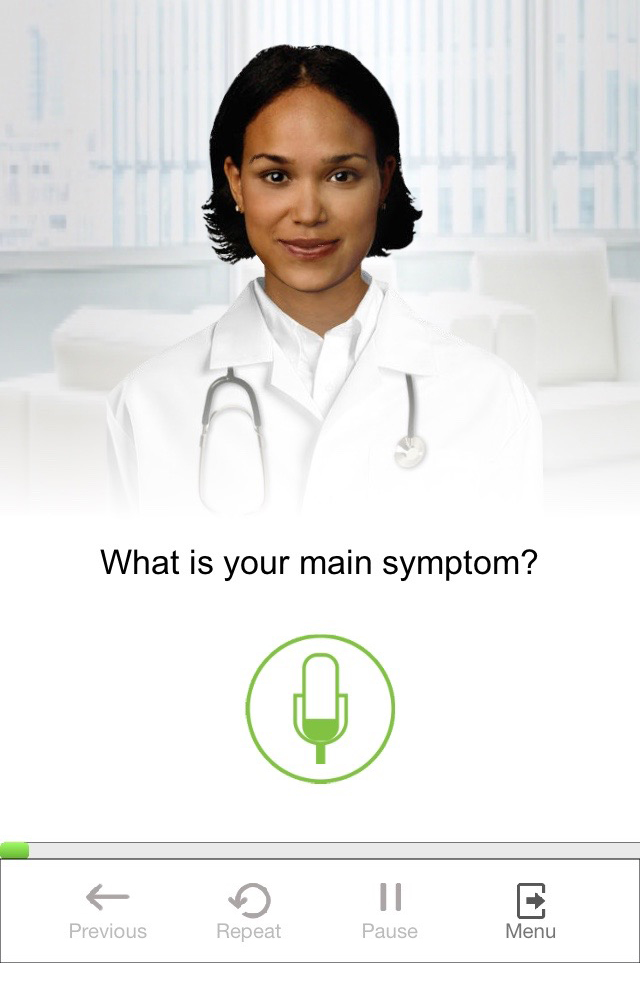

A screenshot of Sense.ly’s avatar, Molly.

Tell us about Sense.ly.

Pearl: Sense.ly has been around for about three years. Its purpose is to help people manage their health care and to help clinicians monitor and help their patients. We chose to have an avatar because research has shown that people engage more with an avatar. They will answer more questions than they would by clicking buttons, and will even be more truthful. In health care, this really matters. Some of our patients get really attached to the avatar. There is a daily check-in, and we have a good compliance rate because people feel accountable to the avatar. Some patients will apologize to the avatar if they miss a check-in, and they will share information about their day. This is important to compliance.

Were there any mistaken assumptions in your original solution that you later corrected?

Pearl: We have a lot of older users, and there are certain mobile app conventions that younger users are used to, for example, scrolling to see more menu options. A lot of older users don’t know these conventions and aren’t as comfortable exploring an app, so we have to make certain UI elements more obvious for them.

When it comes to Molly (the virtual nurse avatar), how much is voice versus manual input?

Pearl: One of our philosophies is to let the user choose their mode of interaction. There is a start-up sequence followed by a series of actions, and during that engagement the user can interact in a variety of ways. Users can always speak. For a yes/no question, we show both buttons on the screen, so you can either tap a button or talk.
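Letting the user choose the mode works best when a tap and a spoken reply end up in the same place. This Python sketch, assuming hypothetical `Tap` and `Speech` event types and a small hand-picked vocabulary (not Sense.ly’s actual design), normalizes either one to a single yes/no answer:

```python
import re
from dataclasses import dataclass
from typing import Optional, Union


@dataclass
class Tap:
    button: str  # label of the on-screen button the user tapped


@dataclass
class Speech:
    text: str  # transcript from a (hypothetical) speech recognizer


YES_WORDS = {"yes", "yeah", "yep"}
NO_WORDS = {"no", "nope", "nah"}


def yes_no_answer(event: Union[Tap, Speech]) -> Optional[bool]:
    """Return True/False for a yes/no question, or None if unclear.

    Both input modes produce the same kind of answer, so the rest of
    the dialog doesn't care whether the user tapped or talked.
    """
    if isinstance(event, Tap):
        return {"yes": True, "no": False}.get(event.button.lower())
    words = set(re.findall(r"[a-z]+", event.text.lower()))
    said_yes = bool(words & YES_WORDS)
    said_no = bool(words & NO_WORDS)
    if said_yes == said_no:  # neither word, or both (contradictory)
        return None
    return said_yes
```

Returning `None` rather than guessing leaves room for a clarifying follow-up, in the spirit of designing for the answers people actually give.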

What else is unique about Molly?

Pearl: One thing we are doing that a lot of others are not doing right now is continuous conversation. Alexa and Siri respond to commands. Our solution is not tap-to-speak; it is turn-taking. It is a back-and-forth, more like a human-to-human interaction. You are not saying “Alexa” every time you want to continue the conversation.

Tell me your thoughts on Alexa.

Pearl: I built an Alexa skill (Daily Wellness Check) for fun, based on one of our daily wellness checks [for Sense.ly]. I learned that the way the Echo approaches voice user interactions is different from the Sense.ly model. It is designed more for simple interactions: you can make a request or two, but it is not a continuous conversation.

Do you have any final pointers for Voice UX designers?

Pearl: Yes. Voice user interfaces are not appropriate for every scenario. In the workplace, not everyone can be talking to their computer at the same time. You also have to consider whether privacy is an issue: I can’t talk about patient records on the train. Sometimes voice interfaces are great, but not always.

To learn more, get a copy of Cathy’s book, Designing Voice User Interfaces, or follow Cathy on Twitter.

Follow @bretkinsella